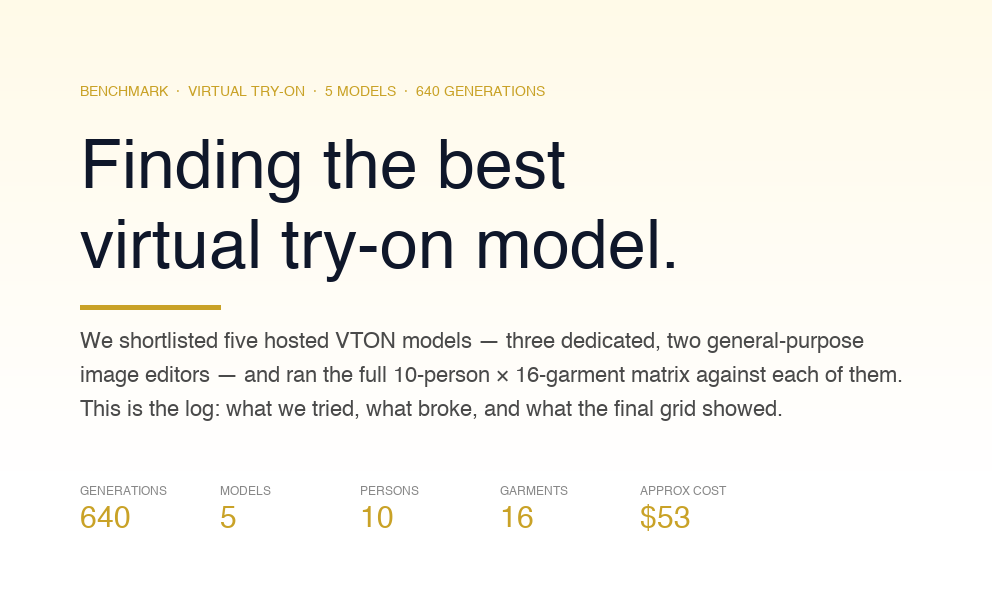

How to deploy a virtual try-on feature to production

For teams shipping VTON this quarter: model selection criteria, infrastructure decisions, production failure modes, and the observability that makes next month’s debugging session start from data.

This is the follow-up to Part 1, where we benchmarked five hosted virtual try-on models across 640 generations — 10 people, 16 garments, controlled and uncontrolled conditions. The benchmark’s core finding: route by garment category. Dedicated VTON models handle the structured cases; a general-purpose editor covers the long tail. There is no single winner.

This post covers everything after that decision — how the request flows, how to configure infrastructure for real load, the failure modes we hit in production, and what to measure so your second month starts from data. We’ve deployed this exact system at Ionio VTON. What follows is the playbook.

This post assumes

You’ve chosen hosted inference over self-training. You’re building a try-on feature in a retail or e-commerce product on a timeline of weeks. The benchmark and quality findings are in Part 1. This post is the deployment architecture: how requests flow, how failures are handled, what to measure.

Four decisions that drive everything else

- Which models, and how do you pick? The benchmark gives you the data. This section gives you the decision framework.

- How does the client talk to the model? A thin proxy you own, never direct calls to the provider.

- How does the proxy route each request? Category and pose aware, with a primary model and an automatic fallback.

- What do you record on every call? Enough to reconstruct exactly what happened when something breaks two weeks from now.

Every section below maps to one of these decisions.

Decision 1: Model lineup

The five models, production-assessed

| Model | License | Hosting | Cost (approx.) | Warm latency | Strength |
| --- | --- | --- | --- | --- | --- |
| CatVTON | Non-commercial research | Self-hosted (RunPod) | ~$0.01–0.03/call | 15–30s | Non-standard poses |
| Kling | Commercial (fal.ai) | Fully hosted | ~$0.07/call | 20–35s | Identity preservation |
| FASHN | Commercial | Fully hosted | ~$0.05–0.08/call | 25–45s | One-pieces, complex fabrics |
| Qwen | Commercial | Fully hosted | ~$0.03–0.05/call | 15–25s | Cost-efficient standard garments |
| Nano Banana Pro | Commercial | Fully hosted | ~$0.04–0.07/call | 30–60s | Long-tail flexibility, edge cases |

CatVTON — non-commercial license. This is the first thing to resolve before putting CatVTON in a production stack. The weights are available on HuggingFace under a non-commercial research license. For consumer-facing commercial products, that’s a legal blocker, not a technical preference. Either obtain a commercial license separately or replace this slot with a pose-capable alternative. Technically, it’s the strongest model in the benchmark for non-standard poses — nothing else handles sitting, back-facing, or lying-down compositions as reliably. The license issue is independent of the technical case; resolve it with your legal team before the integration is in progress.

Kling delivers the best identity preservation in the benchmark: face, body proportions, and skin tone carry through accurately. At ~$0.07/call it is among the most expensive models in the lineup, and its 20–35s warm latency is slower than Qwen or CatVTON. Where photorealism and identity fidelity are the top requirements, it’s the right primary. Tops and standard front-facing shots are its strongest category.

FASHN is mandatory if your catalog includes formalwear, eveningwear, or garments with complex fabric structure — sequins, silk, satin, structured one-pieces. The other four models either flatten these textures or lose the silhouette. FASHN handled sequin drape and silk sheen convincingly in our benchmark; nothing else came close. If your catalog is entirely basics and standard tops/bottoms, FASHN is optional. If it includes any significant formalwear, it’s required.

Qwen has the best cost-to-quality ratio for standard garments. Fastest dedicated VTON in our stack. Where the catalog skews toward simple tops and bottoms and poses are controlled, Qwen handles the majority of traffic cheaply and consistently. Its minimum input dimension is 512×512 — more on that in the failure modes section.

Nano Banana Pro is the general-purpose editor, the most flexible model in the lineup. It produces more output variance than the dedicated VTON models and handles long-tail prompts, unusual compositions, and creative inputs better than any of the others. Its content classifier blocks more legitimate requests than the dedicated models do — but those blocks are manageable with a proper fallback chain and dead-letter logging. See the full 10 × 16 grid — the variance speaks for itself.

How to pick your starting stack

Two questions determine your lineup.

What does your catalog look like?

- Mostly tops and bottoms with standard photography → Qwen as primary (cost efficiency), Kling as secondary for quality-critical SKUs. This pair covers ~80% of standard catalog traffic.

- Significant formalwear, one-pieces, or structured garments → FASHN is a required third member. The pair above can’t cover it reliably.

- Broad catalog with unknown distribution → Start with Kling + Nano Banana Pro and add FASHN once production data surfaces the formalwear failure rate.

What are your users’ photos like?

- Controlled submission (you prompt users for a front-facing shot) → Non-standard poses are rare; the general-editor fallback handles the outliers.

- Uncontrolled photos (selfies, lifestyle shots, arbitrary angles) → The pose-outlier branch handles a meaningful fraction of traffic. CatVTON is the correct model here, but factor its license in. If commercial use is off the table, Nano Banana Pro as the pose-fallback produces acceptable results with higher variance.

Minimum viable stack: one dedicated VTON model (Kling or Qwen) and one general editor (Nano Banana Pro). Add models when production data shows a category failing systematically — not speculatively.

For the empirical quality data behind these recommendations — per-model success rates by garment category, identity preservation scores, failure mode breakdowns — see Part 1.

Decision 2: The proxy

The client never talks to a model provider directly. A thin proxy in your serverless framework — about 20 lines per provider — is the right architecture for three reasons: API keys stay server-side and out of the browser bundle (fal.ai and RunPod keys both have unlimited blast radius if exposed), provider-specific protocols are encapsulated so the client has a single consistent interface regardless of which model was called, and logging, rate limiting, caching, and retry logic all live in one place.

```js
// /api/fal.js (Vercel route)
export default async function handler(req, res) {
  if (req.method !== 'POST') {
    return res.status(405).end();
  }

  // NOTE: validate `endpoint` against an allowlist before forwarding;
  // as written, any caller can reach any fal endpoint with your key.
  const { endpoint, body } = req.body;

  try {
    // The key is read server-side and never reaches the browser bundle.
    const r = await fetch(`https://queue.fal.run/${endpoint}`, {
      method: 'POST',
      headers: {
        Authorization: `Key ${process.env.FAL_KEY}`,
        'Content-Type': 'application/json',
      },
      body: JSON.stringify(body),
    });

    // Pass provider errors through with their original status code.
    if (!r.ok) {
      return res.status(r.status).json({ error: await r.text() });
    }

    const data = await r.json();
    return res.status(200).json(data);
  } catch (err) {
    // Network failure or an unparseable response from the provider.
    return res.status(500).json({ error: err.message });
  }
}
```

The RunPod proxy is structurally identical, except that it polls the /status endpoint until the job completes. Store API keys in your hosting platform’s environment variables (Vercel → Project → Settings → Environment Variables, scoped per environment). Rotate same-day if a key appears in a log, chat, or screenshot.
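
A minimal sketch of that polling loop, written in Python for brevity. `fetch_status` is a hypothetical callable standing in for an authenticated GET against the job’s /status endpoint. The status strings, the 2-second interval, and the 90-second budget are assumptions to check against RunPod’s API reference.

```python
import time
from typing import Callable

# Assumed terminal job states; confirm the exact strings in the provider docs.
TERMINAL = {"COMPLETED", "FAILED", "CANCELLED", "TIMED_OUT"}

def poll_until_done(
    fetch_status: Callable[[], dict],
    timeout_s: float = 90.0,
    interval_s: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Call fetch_status() until the job is terminal or the budget runs out."""
    deadline = time.monotonic() + timeout_s
    while True:
        job = fetch_status()
        if job.get("status") in TERMINAL:
            return job
        # Stop if another sleep would push us past the deadline.
        if time.monotonic() + interval_s > deadline:
            raise TimeoutError(f"job still {job.get('status')!r} after {timeout_s}s")
        sleep(interval_s)
```

Injecting `sleep` keeps the loop testable without real waiting. A terminal state is returned as-is, so the caller still has to distinguish a completed job from a failed one.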

RunPod worker configuration for production

CatVTON runs on RunPod Serverless. The default configuration is tuned for demos; it needs adjustment before production.

Four parameters control worker lifecycle:

Active Workers — Workers that stay provisioned and warm regardless of traffic. At 0, every request from a cold state pays the cold-start penalty (15–40s model load before inference begins). At 1, the primary model is always ready. Set active workers to match your average concurrent load during peak hours, not your peak. An idle A100 worker costs roughly $0.74/hr on RunPod spot pricing — ~$18/day — which is marginal against the conversion impact of a 60-second cold start on a user’s first try-on.

Max Workers — The ceiling on concurrent parallel workers. RunPod scales up to this limit under load and back down during quiet periods. A single worker handles one inference request at a time. Set max workers to your estimated p99 concurrent request count — not average, p99.

GPUs per Worker — GPU allocation per worker slot. CatVTON at half-precision requires at minimum one A100 80GB or L40S. Increasing GPUs per worker does not improve single-request throughput for this workload. Keep it at 1.

Idle Timeout — How long a worker stays alive after completing its last request before RunPod scales it down. The default is 5 seconds. In practice: a user who generates one try-on has a high probability of generating another within the next 30–120 seconds — trying a different garment, adjusting, retrying. At a 5-second timeout, that second request hits a cold start. Set idle timeout to 90–300 seconds. The cost of keeping one warm worker alive for an extra 5 minutes is negligible; the latency cost of a cold start on the retry is not.

RunPod worker settings — tune Active Workers, Max Workers, Idle Timeout for production traffic shape

Recommended starting configuration: 1 active worker, 3–5 max workers, 1 GPU per worker, 120s idle timeout. Tune from there once you have traffic shape data from the first week.
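
Once the first week of traffic data is in, the sizing rules above reduce to a little arithmetic. The sketch below is illustrative only: `suggest_worker_settings` is a hypothetical helper, the input is assumed to be a list of concurrent-request counts sampled during peak hours, and the $0.74/hr rate is the approximate figure quoted above.

```python
import math

def nearest_rank_percentile(samples: list[int], pct: float) -> int:
    """Smallest sample with at least pct% of all samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def suggest_worker_settings(peak_hour_concurrency: list[int],
                            hourly_rate_usd: float = 0.74) -> dict:
    """Map concurrency samples to worker settings plus the always-on cost."""
    mean = sum(peak_hour_concurrency) / len(peak_hour_concurrency)
    active = max(1, round(mean))  # average peak-hour load, never below 1
    max_workers = max(active, nearest_rank_percentile(peak_hour_concurrency, 99))
    return {
        "active_workers": active,
        "max_workers": max_workers,   # p99 concurrency, not the average
        "gpus_per_worker": 1,
        "idle_timeout_s": 120,
        "active_cost_usd_per_day": round(active * hourly_rate_usd * 24, 2),
    }
```

With one active worker at the quoted rate, the always-on cost comes to $17.76/day, which matches the ~$18/day figure above.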

Decision 3: The router

The routing function is eleven lines. The work is in the benchmark that informed it.

```python
def pick_models(garment, person):
    category = garment.get("category") or infer_category(garment)
    if person.get("pose") in ("sitting", "lying", "back"):
        return ("catvton", "nano_banana_pro")
    if category == "tops":
        return ("kling", "qwen")
    if category == "bottoms":
        return ("qwen", "kling")
    if category == "one-pieces":
        return ("fashn", "nano_banana_pro")
    return ("kling", "qwen")  # default
```

Every path returns a (primary, fallback) tuple. The proxy calls the primary; on a recoverable failure (timeout, 5xx, or transient content-policy block) it retries against the fallback automatically.
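
One way the proxy might consume that tuple is sketched below. `RecoverableError` and `call_model` are hypothetical names: the first marks the failure classes listed above, the second stands in for whatever function actually invokes a provider.

```python
from typing import Callable

class RecoverableError(Exception):
    """Timeout, 5xx, or transient content-policy block (hypothetical marker)."""

def run_with_fallback(models: tuple[str, str],
                      call_model: Callable[[str], dict]) -> dict:
    """Try the primary; on a recoverable failure, try the fallback once."""
    primary, fallback = models
    try:
        result = call_model(primary)
        return {**result, "primary_model": primary, "fallback_used": None}
    except RecoverableError:
        # A second failure propagates to the caller instead of looping.
        result = call_model(fallback)
        return {**result, "primary_model": primary, "fallback_used": fallback}
```

Non-recoverable errors, such as a 4xx caused by bad input, are deliberately not caught here, because a different model would fail on the same input.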

Category-only routing handles 80–90% of requests correctly. Two additional routing dimensions are worth adding once production data justifies them:

Fabric detection. FASHN dramatically outperforms the other models on sequins and silk. A lightweight fabric classifier lets you route sequin tops to FASHN even when they’re categorized as “tops.” Cost: ~200ms per call. Add this when production logs show FASHN consistently outperforming the router’s primary on specific fabric types.

Pose estimation. The pose branch above assumes you have pose metadata. If user photos are uncontrolled, run a lightweight pose estimator on the person image and bucket into standing / sitting / lying / back. Cost: ~300ms per call. Add this when the fallback chain is absorbing a meaningful fraction of pose-related failures and those failures show up in thumbs-down rates.

Add neither speculatively. Add them when the category-only router’s per-category failure rate surfaces a specific problem in your dashboards.

Decision 4: What you log

Structured per-call JSONL. One entry per inference call. Same schema across all models. This is the most under-invested part of most VTON deployments.

```json
{
  "ts": "2026-04-25T10:32:41Z",
  "request_id": "r_01hw4xn9v",
  "user_id": "u_anon_2f9c1",
  "primary_model": "kling",
  "fallback_used": null,
  "category": "tops",
  "pose": "standing",
  "status": "ok",
  "duration_ms": 24183,
  "upload_ms": 3102,
  "model_ms": 19981,
  "download_ms": 1100,
  "cost_usd": 0.07,
  "input_hash_p": "sha256:...",
  "input_hash_g": "sha256:...",
  "user_feedback": null
}
```

What each field does:

request_id is the join key between frontend logs, proxy logs, and error reports. Without it, debugging a specific user complaint is archaeology.

primary_model and fallback_used give you routing-level stats — how often each model fires, how often the fallback saves the call. This is how you detect when a model’s reliability degrades before it shows up in user complaints.

duration_ms broken into upload / model / download shows where latency is actually spent. The difference between “the model is slow” and “we’re uploading 8 MB JPEGs on every call” shows up here and nowhere else.

input_hash_p and input_hash_g are SHA-256 of the person and garment bytes. The hashes let you detect duplicate submissions (user retrying) and build a content-hash cache without storing any images. You never need to store the originals to get cache hit data.

user_feedback is the cheapest labeled dataset you will collect. Show a thumbs up / thumbs down control in the result UI, inline next to the output, not buried in a survey flow. Write 1 for up, -1 for down, and leave null for no response. At a 30% response rate (typical for a well-placed inline prompt), 1,000 try-ons per month produce 300 labeled outputs. Cut thumbs-down rate by model and by category: within weeks, you’ll see which (model, category) pairs are producing dissatisfied users without having to manually review a single output.

Pipe this JSONL to your existing observability stack — Grafana, Datadog, Axiom, S3 + Athena. The schema is the load-bearing part; the destination is interchangeable.
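
A minimal sketch of producing one such line in the proxy. `build_log_line` and `content_hash` are hypothetical helpers. The sketch assumes `duration_ms` is the sum of the three phases, which matches the sample entry above.

```python
import hashlib
import json
from datetime import datetime, timezone

def content_hash(data: bytes) -> str:
    """SHA-256 of the raw image bytes, prefixed the way the schema shows."""
    return "sha256:" + hashlib.sha256(data).hexdigest()

def build_log_line(*, request_id, user_id, primary_model, category, pose, status,
                   upload_ms, model_ms, download_ms, cost_usd,
                   person_bytes, garment_bytes, fallback_used=None) -> str:
    """Serialize one inference call as a single JSONL line (no newline)."""
    entry = {
        "ts": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "request_id": request_id,
        "user_id": user_id,
        "primary_model": primary_model,
        "fallback_used": fallback_used,
        "category": category,
        "pose": pose,
        "status": status,
        # Assumption: total duration is the sum of the three phases.
        "duration_ms": upload_ms + model_ms + download_ms,
        "upload_ms": upload_ms,
        "model_ms": model_ms,
        "download_ms": download_ms,
        "cost_usd": cost_usd,
        "input_hash_p": content_hash(person_bytes),
        "input_hash_g": content_hash(garment_bytes),
        "user_feedback": None,  # filled in later if the user rates the output
    }
    return json.dumps(entry)
```

Only the hashes leave the request handler, so the log can support duplicate detection without the images themselves being stored.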

Production failure modes

We recovered 67 failures out of 705 calls across the benchmark — about 10%. Most of them will happen to you. Here’s what to build for each, in order of how likely you are to hit them.

Image-size validators

Every hosted provider caps input dimensions. Qwen’s minimum is 512×512; fal’s general maximum is 2048×2048; individual endpoints differ. Handle this client-side before the upload happens: check dimensions, downscale anything over 2048 with Lanczos, upscale anything under 640. 640 is the floor — sitting at a provider’s exact validation limit means the first time they tighten validation, edge cases break. Re-encode as JPEG at quality 92. Users never see an image dimension error because the problem is resolved before the upload.
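
The dimension arithmetic can be sketched as follows, in Python for illustration even though the check itself runs client-side. `target_size` is a hypothetical helper. It assumes the 640 floor applies to the shorter side and the 2048 cap to the longer side, and that the cap wins when an extreme aspect ratio makes both impossible. The Lanczos resampling and JPEG re-encode are left to whichever image library the client uses.

```python
def target_size(width: int, height: int,
                floor: int = 640, ceiling: int = 2048) -> tuple[int, int]:
    """Scale so the long side <= ceiling and, where possible, short side >= floor."""
    short_side, long_side = min(width, height), max(width, height)
    scale = 1.0
    if long_side > ceiling:
        scale = ceiling / long_side            # downscale oversized images
    elif short_side < floor:
        scale = floor / short_side             # upscale undersized images
        scale = min(scale, ceiling / long_side)  # but never past the ceiling
    return round(width * scale), round(height * scale)
```

For example, `target_size(4096, 2048)` yields `(2048, 1024)` and `target_size(320, 480)` yields `(640, 960)`. An image already inside both bounds is returned unchanged.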

Category-name mismatches between providers

FASHN takes "one-pieces", not "dresses". CatVTON takes "upper" / "lower" / "overall". Kling ignores category entirely. A single CATEGORY_MAP per provider prevents this entire class of bugs:

```python
FASHN_CATEGORY = {
    "tops": "tops",
    "bottoms": "bottoms",
    "one-pieces": "one-pieces"
}

CATVTON_CATEGORY = {
    "tops": "upper",
    "bottoms": "lower",
    "one-pieces": "overall"
}
```

Category names differ by provider. Read each provider’s API reference on first integration.
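
A small lookup helper keeps the translation in one place and fails loudly on an unmapped category. This is a sketch: `PROVIDER_CATEGORY` and `provider_category` are hypothetical names, and returning `None` for providers with no mapping (such as Kling, which ignores category) is an assumed convention.

```python
from typing import Optional

PROVIDER_CATEGORY = {
    "fashn": {"tops": "tops", "bottoms": "bottoms", "one-pieces": "one-pieces"},
    "catvton": {"tops": "upper", "bottoms": "lower", "one-pieces": "overall"},
}

def provider_category(provider: str, category: str) -> Optional[str]:
    """Translate an internal category into the provider's own vocabulary."""
    mapping = PROVIDER_CATEGORY.get(provider)
    if mapping is None:
        return None  # provider takes no category argument
    if category not in mapping:
        # Fail here rather than letting the provider reject the request.
        raise ValueError(f"unmapped category {category!r} for {provider}")
    return mapping[category]
```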

Cold starts on RunPod

The first request to a freshly-provisioned worker can return None because model weights are still loading. Two mitigations: fire a lightweight pre-warm ping from the client when the user opens the try-on UI (by the time they’ve uploaded their photo, the worker is warm), and implement a single retry in the RunPod proxy when the response body matches the cold-start signature. The retry is safe — the request is idempotent and cold starts happen at most once per worker provisioning event.

The RunPod configuration section above is the upstream fix: active workers and a tuned idle timeout eliminate cold starts for warm-path traffic entirely.
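
The single-retry mitigation might look like the sketch below. It assumes the cold-start signature is a `None` result, as described above. `call` is a hypothetical zero-argument function that submits the request, and the 5-second wait is an arbitrary starting value.

```python
import time
from typing import Callable, Optional

def call_with_cold_start_retry(call: Callable[[], Optional[dict]],
                               wait_s: float = 5.0,
                               sleep: Callable[[float], None] = time.sleep) -> dict:
    """Retry exactly once when the first response looks like a cold start."""
    result = call()
    if result is None:
        sleep(wait_s)  # give the worker time to finish loading weights
        result = call()
    if result is None:
        raise RuntimeError("worker still cold after one retry")
    return result
```

Capping the retry at one attempt keeps a genuinely broken worker from turning into an unbounded retry loop. The second failure surfaces to the fallback chain instead.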

Safety-filter blocks on general editors

Nano Banana Pro and similar general-purpose editors carry content classifiers that block legitimate garment requests — typically form-fitting or revealing garments. When a safety-filter block occurs, the proxy routes the request to a fallback model without the classifier (CatVTON, FASHN, Kling — none carry the same content filter), logs the block to a dead-letter queue, and returns the fallback’s output to the user.

The dead-letter queue is the point. A weekly review of blocked-but-legitimate requests is the dataset for tuning routing rules: if a specific garment type hits the filter on the general editor at a consistent rate, move those requests to a dedicated VTON model permanently. The log tells you when that threshold is crossed.

Silent timeouts and network flakes

1–2% of long-running requests die mid-flight with no useful error — a network blip, connection pool exhaustion, a timeout somewhere in the pipeline. Set a 90-second timeout in the proxy. Surface it to the user with a clear message and a one-click retry. Users tolerate a clear “please try again” far better than an opaque spinner at the same latency.

Latency: the budget and how to spend it

Users bounce after ~60 seconds. Models run 15–90 seconds depending on model and cold-start state. That means the latency budget is already tight.

Upload person image and garment image concurrently. This is a five-line change that saves 3–5 seconds on every call. Sequential uploads are the single most common fixable latency waste in VTON deployments.

Pre-warm the hot path. If your traffic concentrates on one model (common when catalogs are category-skewed), send a keepalive ping every 90 seconds during business hours to maintain one warm worker. RunPod pricing makes the idle cost marginal at this frequency.

Name the current phase in the UI. “Uploading → Sending to CatVTON → Processing (30–60s) → Downloading.” This is a perception change, not a performance change. Users who understand the task duration don’t abandon at the midpoint the way they do with an opaque spinner.
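
The concurrent-upload change is sketched below in Python with asyncio. In a browser client the equivalent would be two uploads awaited together. `upload` is a stand-in that sleeps instead of performing a real transfer, and the returned URLs are placeholders.

```python
import asyncio

async def upload(name: str, data: bytes, delay_s: float = 0.01) -> str:
    """Stand-in for a real upload; returns a placeholder URL after a delay."""
    await asyncio.sleep(delay_s)
    return f"https://example.invalid/{name}"

async def upload_both(person: bytes, garment: bytes) -> tuple[str, str]:
    """Start both uploads at once; total time is the slower of the two."""
    person_url, garment_url = await asyncio.gather(
        upload("person.jpg", person),
        upload("garment.jpg", garment),
    )
    return person_url, garment_url
```

Called as `asyncio.run(upload_both(person_bytes, garment_bytes))`, the two transfers overlap, so the wall-clock cost is the slower upload rather than the sum of both.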

What to measure in the first month

Build these dashboards on day one. Without them, you can’t tell if the deployment is performing or degrading.

Per-model p50 / p95 / p99 latency. The p99 is where users churn. The median is where you feel good about performance. Watch both.

Per-category success rate. Tops, bottoms, one-pieces — which categories fail more than others? Systematic category failures are routing signals, not random noise.

Cost per successful try-on. Cost per call is the wrong denominator. Cost divided by successful outputs is what matters. A model at $0.07/call with a 5% failure rate has an effective cost of $0.074 per success. This is the number finance cares about.

Thumbs-up rate by (model, category). Per-model latency tells you about performance; thumbs-up rate tells you about quality from the user’s perspective, which is the only perspective that matters for retention. A model with good p95 latency and a 40% thumbs-down rate on bottoms is not a good primary for bottoms, regardless of what the benchmark says. User feedback is the ground truth; the benchmark is the prior.

Dead-letter queue depth. How many calls land in the content-policy bucket per week. A growing queue means the primary models’ safety filters are increasingly mismatched to your actual user content — a routing adjustment signal.

Five metrics. Wire them to Grafana Cloud or Datadog; the queries are straightforward from the JSONL schema. Worth every minute before you ship.
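
Two of these metrics are sketched below as plain Python over parsed JSONL records, as a reference for what the dashboard queries compute. The function names are hypothetical. The cost function assumes failed calls are still billed, which is what the $0.074 example implies. The feedback function attributes each rating to the model that produced the output, meaning the fallback when one was used.

```python
from collections import defaultdict

def cost_per_success(records: list[dict]) -> float:
    """Total spend divided by successful outputs, not by calls."""
    total = sum(r["cost_usd"] for r in records)
    successes = sum(1 for r in records if r["status"] == "ok")
    return total / successes if successes else float("inf")

def thumbs_down_rate(records: list[dict]) -> dict:
    """Share of rated outputs with user_feedback == -1, per (model, category)."""
    rated = defaultdict(int)
    down = defaultdict(int)
    for r in records:
        if r.get("user_feedback") is None:
            continue  # unrated outputs are excluded from the denominator
        model = r.get("fallback_used") or r["primary_model"]
        key = (model, r["category"])
        rated[key] += 1
        if r["user_feedback"] == -1:
            down[key] += 1
    return {key: down[key] / rated[key] for key in rated}
```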

When to add complexity

The minimum viable deployment: proxy, router, structured logging, and the five dashboards above. Everything else is opt-in, triggered by what the observability surfaces.

Caching — when the input-hash repeat rate crosses ~15%. Serve cached successful outputs by person+garment hash from S3/R2 instead of regenerating. Engineering cost: ~1 day. Impact: proportional reduction in inference spend. Highest ROI item on this list.

Fabric classifier — when production logs show FASHN consistently outperforming the router’s primary on specific garment types. A lightweight classifier intercepts those requests upstream of the router. Engineering cost: 1–2 weeks (dataset labeling + classifier). Justified only when per-category failure rate and thumbs-down data both indicate a systematic gap that category routing alone can’t fix.

LPIPS-based auto-validation — when user feedback signals identity drift that model failure codes don’t catch. Compute the LPIPS perceptual distance between the input person and the output image (higher means less similar); route to the fallback when the distance exceeds a threshold. Adds ~500ms per call. Engineering cost: 2–3 days. Add this when the thumbs-down rate on specific models stays elevated after routing adjustments. That pattern means the primary is generating plausible-looking outputs that don’t match the input person, a failure mode that inference status codes won’t surface.

Additional model provider — when a category consistently fails across the entire current lineup, visible in both per-category success rate and thumbs-down rate. For us, this was formalwear before FASHN was added. The trigger is always data from production, not a hypothesis about what a new model might do.

Every item above costs real engineering time and adds operational surface area. Defer until the dashboards tell you to act.
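
For the caching item, both the trigger and the lookup can be sketched from the per-call log fields. The names below are hypothetical, and a plain dict stands in for the S3/R2 bucket. The repeat rate counts calls whose person and garment hash pair has been seen before.

```python
from typing import Callable

def repeat_rate(records: list[dict]) -> float:
    """Fraction of calls whose (person, garment) hash pair was already seen."""
    seen, repeats = set(), 0
    for r in records:
        pair = (r["input_hash_p"], r["input_hash_g"])
        if pair in seen:
            repeats += 1
        seen.add(pair)
    return repeats / len(records) if records else 0.0

def get_or_generate(store: dict, input_hash_p: str, input_hash_g: str,
                    generate: Callable[[], dict]) -> tuple[dict, bool]:
    """Return (result, cache_hit). Only successful outputs are cached."""
    key = f"{input_hash_p}/{input_hash_g}"
    if key in store:
        return store[key], True
    result = generate()
    if result.get("status") == "ok":
        store[key] = result
    return result, False
```

Caching only successful results means a failed generation is retried on the next request rather than served again from the cache.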

Checklist for shipping in one week

- [ ] Two model providers chosen — minimum one dedicated VTON + one general editor

- [ ] Proxy routes for each provider (~20 lines each), API keys in hosting platform env vars only

- [ ] RunPod configured: 1 active worker, appropriate max workers, 120s idle timeout

- [ ] Category-aware router with primary + fallback tuple output

- [ ] Client-side upload validation — dimension check, resize to 640–2048, re-encode at JPEG 92

- [ ] Pre-warm ping on page load for providers with cold starts

- [ ] Per-call JSONL logging with request_id, timing breakdown, cost, user_feedback field

- [ ] Thumbs up / thumbs down control in the result UI, wired to per-call log

- [ ] Five dashboards: latency percentiles, success rate by category, cost per success, thumbs-up rate by (model, category), dead-letter depth

- [ ] 90-second proxy timeout with a clear user-facing retry message

- [ ] Progress UI naming the current phase

Check all eleven of these and you’re shipping a VTON deployment that will hold up.

Try the reference deployment

We built and deployed the architecture above — it’s live at Ionio VTON. Upload a person photo and garment, watch the router pick a model, see the result. The app logs which model it routed to on every call, so you can observe the routing logic in production rather than in a diagram.

Work with us

We help retail and e-commerce teams ship production AI — virtual try-on features, product photography pipelines, benchmarks and routing layers, and the deployment architecture that holds it all together. Part 1 was the benchmark. Part 2 is the deployment. If you’re building something similar and want a team that takes production as seriously as the demo, we’d love to hear from you.

Part 2 of the VTON series. Part 1 — the model-by-model benchmark across 640 generations. This post — the practical deployment guide. May 2026.