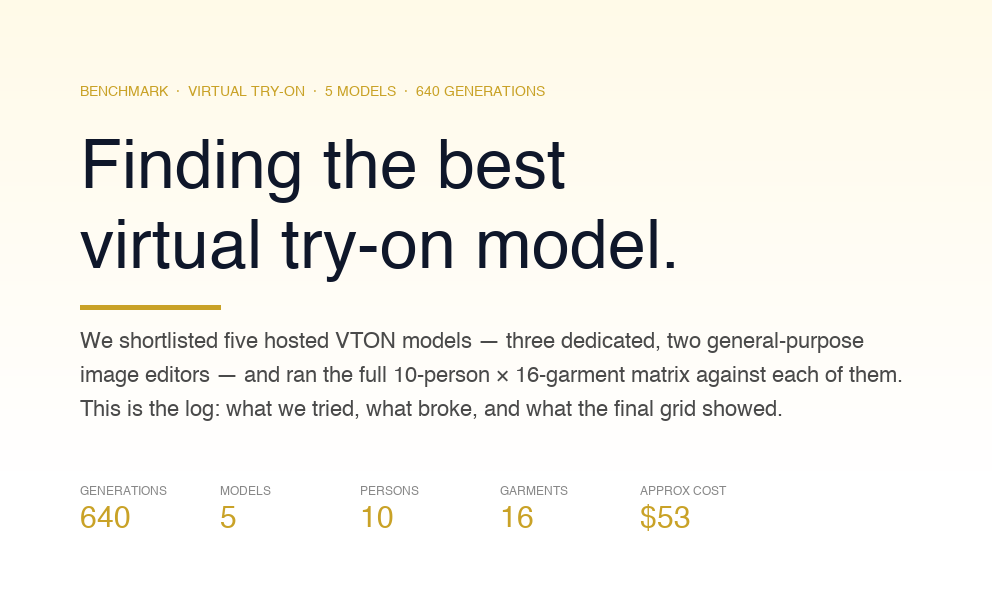

This article explores how GPT and AI could drastically alter the legal sector. Should your legal practice use AI? We'll go through eight possible applications, their advantages, and the disadvantages of traditional manual work. We'll also discuss the ethical questions and issues that arise.

Join us as we explore the convergence of GPT, AI, and the law, and examine how these tools could reshape the way lawyers communicate, cooperate, and dispense justice in today's global environment.

Should law firms utilize GPT and AI or not?

The figures below come from a Thomson Reuters Institute survey of 443 respondents from midsize and large law firms in the U.S., U.K., and Canada, conducted in March 2023.

More than half of those polled think generative AI and ChatGPT should be utilized in the legal field, yet just 3% of respondents claim they are using it or plan to use it throughout their organization.

The study also shows that many people seek additional information and direction on utilizing generative AI efficiently and ethically due to worries about its accuracy, privacy, and ethical implications.

The high perceived risk of generative AI is likely a significant reason why its potential benefits have yet to be widely realized. Law firm attorneys and other legal professionals have concerns about the accuracy of generative AI, how it handles confidential data, and, in the case of publicly available tools like ChatGPT, who owns the data and how secure it is.

Most law firm respondents either expressed worry about the potential dangers of using generative AI or admitted they lacked the knowledge to give an informed opinion. Sixty-two percent of respondents said their law firms were worried about the risks of using generative AI in the workplace, while another 36% were unsure. Only 2% of respondents from the sample of 157 law firms said their organizations had no worries about using generative AI or ChatGPT in the workplace, and all of those respondents were from smaller firms.

The wrong way to utilize GPT and AI for law?

Attorney Steven A. Schwartz relied on ChatGPT to draft a legal brief, which turned out to contain fabricated judicial opinions and citations. The judge, P. Kevin Castel, held a hearing to consider sanctions against him and his colleague, Peter LoDuca, and questioned him about the filing.

- Judge Castel questioned Mr Schwartz about his submitted legal brief, which contained fake judicial opinions and legal citations generated by ChatGPT.

- The judge asked him if he had read the brief before submitting it, to which Mr Schwartz replied that he had not.

- The judge then asked him if he had reviewed the brief for accuracy, to which Mr Schwartz replied that he had not.

- The judge then asked him if he had reviewed the brief for authenticity, to which Mr Schwartz replied that he had not.

- The judge then asked him if he had reviewed the brief for compliance with the rules of professional conduct, to which Mr Schwartz replied that he had not.

After hearing Mr Schwartz's explanation, the judge stated he was still considering issuing sanctions against him and his colleague. The case is also a warning that attorneys should exercise caution and responsibility when employing AI for legal work because of the inherent risks.

Challenges of managing day-to-day legal work

Time-Consuming

When many documents are involved, manual review can be lengthy. Lawyers may spend a lot of time going through piles of paperwork in search of helpful information or red flags that could lead to legal trouble.

Human Error

Manual document review relies on humans, who make mistakes. The work is monotonous, so errors and omissions become more likely as focus lapses or tiredness sets in. Even the most careful attorneys can overlook evidence that might significantly influence their client's case.

Inconsistency

When many lawyers analyze the same documents, their judgments and decisions may be inconsistent. Divergent readings of the documents and subjective evaluations of their significance or legal consequences can lead to conflicting analyses and conclusions.

Costly and Resource-Intensive

Manual document review is labor-intensive, which makes it expensive for law firms and their clients. Budgets can be strained, and other important work put on hold, when lawyers or legal staff are brought in to review documents.

Scalability

Manual document review becomes increasingly difficult when dealing with massive volumes of data, as in large-scale litigation or complicated transactions. Quickly reviewing and analyzing thousands, let alone millions, of documents by hand can be challenging.

Safeguarding Privacy

Maintaining data privacy and security during manual document review can be difficult. When numerous people access the same digital or physical material, the likelihood of a security breach or unauthorized viewing increases.

Debates about the ethics of AI in law

The subject of bias is crucial to the discussion of the morality of AI in the legal context. Algorithms and machine learning enable AI systems to process information and provide predictions. However, the resulting AI may be prejudiced if biased data is utilized to train these systems. This is especially problematic in the legal field, where the choices of judges and attorneys can have far-reaching consequences for the lives of their clients.

The issue of who should be held responsible is also highly contentious. Who is accountable if an AI makes a mistake or gives an unjust result? Who is more accountable for a system's functionality, creators or users? This is particularly concerning when artificial intelligence systems make judgments with significant legal or ethical implications, such as punishing criminals or selecting employees.

Ethics of Using AI in Law Firms

Governments and legal organizations throughout the globe are working to solve these issues by creating legislation and standards for applying AI in the legal system. Transparency and accountability in the use of AI are addressed, for instance, in the General Data Protection Regulation (GDPR) of the European Union, and the American Bar Association has produced recommendations on the use of AI in the legal profession.

Duty of competence

Rule 1.1 of the ABA Model Rules states that lawyers have a duty to provide competent representation to their clients. This means they must have the necessary legal knowledge, skills, thoroughness, and preparation to handle the case effectively. The rule also emphasizes the importance of staying informed about current technology.

In 2012, the ABA amended Comment 8 to Rule 1.1 to state explicitly that lawyers should keep up with changes in the law and its practice, including the benefits and risks associated with relevant technology. While there's no specific requirement for lawyers to use AI in their practice, they should be aware of technologies that can enhance their legal services and benefit their clients.

Lawyers are expected to have a basic understanding of how AI tools work. They don't need to know all the technical details but should comprehend how AI technology produces results. For instance, if a lawyer uses a tool that suggests legal answers, they must understand its capabilities, limitations, and the risks and benefits of relying on its solutions. This ensures that lawyers can effectively evaluate and utilize AI tools to serve their clients' needs.

Duty to communicate

According to ABA Model Rule 1.4, lawyers have a duty to communicate with their clients and discuss how they will achieve the client's goals. This duty includes talking to the client about using AI in providing legal services. Before using AI, the lawyer should get the client's approval, and the client should be fully informed about the decision.

During this discussion, the lawyer should explain the risks and limitations of using AI tools. In some cases, if using AI would benefit the client, the lawyer should also inform the client if they decide not to use AI. This is important because declining to use AI that would lower the cost of legal services might be seen as charging the client an unreasonable fee, which goes against ABA Model Rule 1.5.

Duty of Confidentiality

According to the ABA Model Rule 1.6, lawyers must keep their client's information confidential. This means they must take reasonable steps to prevent accidental or unauthorized disclosure or access to the information related to their client's cases. However, some AI tools may require sharing client information with third-party vendors. In such cases, lawyers must ensure that their client's information is adequately protected.

Lawyers need to communicate effectively with their clients about the use of AI and discuss the confidentiality measures in place. They should ask the AI providers about the type of information that will be shared, how it will be stored, what security measures are in place, and who will have access to it. Lawyers should only use AI tools if they are confident that their client's confidential information will be secure.

Duty to Supervise

According to the ABA Model Rules 5.1 and 5.3, lawyers are responsible for supervising lawyers and nonlawyers who help them provide legal services. This means ensuring that everyone follows the rules of professional conduct. In 2012, the title of Model Rule 5.3 was changed to clarify that it applies to human and non-human (such as AI) assistants. So, lawyers must also supervise the work done by AI systems in legal services and understand the technology well enough to ensure it meets ethical standards. They must ensure the AI's work is accurate and complete and doesn't risk revealing confidential client information.

However, there are specific tasks for which current AI technology may not be suitable, and lawyers need to know when to handle those tasks themselves. They should also avoid underusing AI, as it can help them serve their clients more efficiently. It's all about finding the right balance. Many lawyers like to have control and pay attention to details in their work, so the real danger might be not using AI enough rather than relying too much on it.

Harvey: the OpenAI-backed legal AI

Harvey AI is a generative AI tool created especially for elite law firms. It builds customized large language models (LLMs) to address difficult legal problems in any area of law under any legal system. Harvey AI is based on a modified version of OpenAI's GPT models and is designed for legal use.

AI startup Harvey has secured $21 million in a Series A investment round led by Sequoia, with participation from OpenAI and other investors. Harvey will use the funds to expand its workforce, build new AI systems, and partner with law firms to improve their processes and offerings. Harvey uses OpenAI's GPT-4 language model to build generative AI tools that address legal questions.

On 15 March 2023, PwC and the AI company Harvey announced a worldwide agreement giving PwC's Legal Business Solutions experts exclusive access to the AI platform, making PwC the first Big Four firm to do so.

Access to this technology will allow PwC to serve its numerous international clients better. The agreement has several significant advantages, including:

- With Harvey, PwC experts in more than a hundred countries will have access to cutting-edge generative AI. As a result, PwC's team of over 4,000 legal experts will be better able to provide human-led and technology-enabled legal solutions across a broad spectrum of practice areas, such as contract analysis, regulatory compliance, claims management, due diligence, and more general legal advisory and legal consulting services.

- To assist customers in further optimizing their internal legal procedures, PwC will collaborate with Harvey to bring the platform to market. With Harvey's help, PwC plans to build and train its proprietary AI models to produce tailor-made products and services for internal use cases and clients across Legal Business Solutions.

If you are concerned about privacy, head over to our blog, where we cover OpenAI's privacy and security concerns in detail.

How Law firms can use ChatGPT and AI?

We asked ChatGPT first.

Let’s dig deep into the possibilities right now!

Document management and automation

Law firms increasingly rely on electronic document storage, but it shares many of the problems of paper storage. Electronic records make documents easier to organize and access, yet they still need storage space.

AI-driven document management software can store and organize legal documents, such as contracts, case files, notes, and emails, using tagging and profiling features. Combined with full-text search, this way of archiving and managing digital data makes information quick to locate.
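To make the idea of tagging plus full-text search concrete, here is a minimal in-memory sketch. It is not how any particular product works; the `Document` and `DocumentIndex` names and the sample documents are made up for illustration, and a real system would add persistence, access control, and much smarter search.

```python
import re
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Document:
    """Hypothetical record for one stored legal document."""
    doc_id: str
    text: str
    tags: set = field(default_factory=set)


class DocumentIndex:
    """Tiny in-memory index: tag lookup plus keyword search over the full text."""

    def __init__(self) -> None:
        self.docs = {}
        self.by_tag = defaultdict(set)   # tag  -> ids of documents carrying it
        self.by_word = defaultdict(set)  # word -> ids of documents containing it

    def add(self, doc: Document) -> None:
        self.docs[doc.doc_id] = doc
        for tag in doc.tags:
            self.by_tag[tag.lower()].add(doc.doc_id)
        for word in re.findall(r"[a-z0-9]+", doc.text.lower()):
            self.by_word[word].add(doc.doc_id)

    def search(self, query: str, tag: Optional[str] = None) -> list:
        """Return sorted ids of documents containing every query word
        (and carrying the tag, if one is given)."""
        words = re.findall(r"[a-z0-9]+", query.lower())
        if not words:
            return []
        hits = set(self.docs)
        for word in words:
            hits &= self.by_word.get(word, set())
        if tag is not None:
            hits &= self.by_tag.get(tag.lower(), set())
        return sorted(hits)


index = DocumentIndex()
index.add(Document("nda-001", "Mutual non-disclosure agreement with Acme Corp", {"contract", "nda"}))
index.add(Document("memo-007", "Memo on the Acme Corp indemnification dispute", {"case-file"}))

print(index.search("acme corp"))             # matches both documents
print(index.search("acme", tag="contract"))  # matches only the NDA
```

The tag filter and the keyword filter are both plain set intersections, which is why combining them takes one extra line.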

For the sake of version control and security, document management solutions also permit document ID and check-in/check-out capabilities. Document management software may integrate with popular programs like Microsoft Office for even more streamlined file sharing.

By employing intelligent templates, document automation helps law firms save time and effort when drafting legal documents by automatically populating form fields with data from client files. Letters, agreements, motions, pleadings, bills, and invoices may all be generated quickly and easily with the help of legal document automation.
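As a rough illustration of populating template fields from a client file, the sketch below uses Python's standard `string.Template`. The letter wording, the field names, and the sample client record are all invented for the example; commercial document automation tools are far more capable.

```python
from string import Template

# Hypothetical engagement letter; "$$" produces a literal dollar sign.
ENGAGEMENT_LETTER = Template(
    "Dear $client_name,\n\n"
    "Thank you for retaining $firm_name in the matter of $matter.\n"
    "Our agreed hourly rate is $$${hourly_rate}.\n"
)


def fill_letter(template: Template, client_record: dict) -> str:
    """Populate the template from a client record.

    substitute() raises KeyError when a field is missing, so an
    incomplete record fails loudly instead of producing a letter with gaps.
    """
    return template.substitute(client_record)


client = {
    "client_name": "Jane Doe",
    "firm_name": "Example LLP",
    "matter": "Doe v. Roe",
    "hourly_rate": 350,
}
print(fill_letter(ENGAGEMENT_LETTER, client))
```

Using `substitute` rather than `safe_substitute` is a deliberate choice here: for legal documents, a missing field should stop the process rather than slip through unnoticed.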

Clio provides unlimited storage for legal documents, allowing users to store thousands of files, including images, audio, and videos. The platform offers quick file retrieval through global search and enables easy bulk upload and download of entire folders. Electronic signature capabilities allow users to review, send, and securely store signed documents. Clio's mobile and desktop accessibility enables users to access, redline, and annotate documents anytime, anywhere, streamlining their workflow.

Contract Analysis and Drafting

Analyzing contracts entails examining them in detail to understand the parties' rights, responsibilities, and potential risks. A lawyer's job is to read over a contract and point out areas where there may be room for interpretation or negotiation. With this information, the legal ramifications and hazards of entering into or altering a contract can be better assessed. It also helps gauge compliance with applicable rules.

The term "contract drafting" refers to preparing legal documents, such as contracts, that give clients legal protection. When drafting a contract, attorneys use their knowledge of the law to ensure that the terms and conditions fairly and correctly represent the parties' objectives and expectations. The aim is to draft with enough clarity, accuracy, and thoroughness to prevent or resolve any legal problems or ambiguities that may arise.

In the past, law firms handled contract analysis and drafting manually, in several phases:

- The attorney analyzes the contract's objective and the parties involved.

- To verify compliance, they investigate any questions raised by the contract and any areas where clarification is needed.

- Lawyers collect information and negotiate terms in collaboration with clients and other parties involved.

- Lawyers use their knowledge of the law to draft the contract provisions by hand, taking into account the parties' agreed requirements and any problems identified.

- Several people review the draft contract to ensure its validity and accuracy.

- Finally, the contract is reviewed and approved before it can be signed.

With GPT, much of this first-pass analysis and drafting can be done in minutes, although a lawyer still needs to review the output.
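A first-pass review of this kind might be wired up roughly as follows. This is only a sketch under stated assumptions: the prompt wording, the clause-numbering convention ("1.", "2.", ...), and the `complete` callable are all hypothetical, and `fake_model` is a stand-in so the example runs without calling any real model or API.

```python
import re
from typing import Callable

# Hypothetical prompt; real wording would be tuned by the firm.
REVIEW_PROMPT = (
    "You are assisting a lawyer. Summarize the clause below in one sentence "
    "and list any ambiguous terms or risks.\n\nClause:\n{clause}"
)


def split_clauses(contract_text: str) -> list:
    """Split on lines that start with a clause number such as '1.' or '12.'."""
    parts = re.split(r"(?m)^(?=\d+\.\s)", contract_text)
    return [part.strip() for part in parts if part.strip()]


def review_contract(contract_text: str, complete: Callable[[str], str]) -> list:
    """Send each clause to the model and collect its comments.

    `complete` is any function that maps a prompt to a text reply.
    Every result is marked as not yet reviewed by a lawyer.
    """
    reviews = []
    for clause in split_clauses(contract_text):
        prompt = REVIEW_PROMPT.format(clause=clause)
        reviews.append(
            {"clause": clause, "review": complete(prompt), "reviewed_by_lawyer": False}
        )
    return reviews


def fake_model(prompt: str) -> str:
    """Stand-in for a real language model call (assumption for the demo)."""
    return f"[model output for a {len(prompt)}-character prompt]"


contract = (
    "1. Term. This agreement lasts two years.\n"
    "2. Termination. Either party may terminate on reasonable notice.\n"
)
for item in review_contract(contract, fake_model):
    print(item["clause"], "->", item["review"])
```

Keeping the model behind a plain callable makes it easy to swap providers, and the `reviewed_by_lawyer` flag keeps the human check explicit in the data.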

Legal Research

Gathering and analyzing important legal material, such as legislation, regulations, case law, and precedents, is essential to every law firm's operations. Finding and evaluating relevant primary and secondary legal materials is necessary for providing sound legal advice and representation and bolstering legal arguments.

Legal research draws on both primary and secondary sources. Laws, constitutions, administrative rules, and judicial rulings are all examples of primary sources. Treatises, articles in legal periodicals, dictionaries, and encyclopedias are all examples of secondary sources in law. Secondary sources benefit legal research through the interpretations, analyses, and commentary they provide.

Casetext is an artificial intelligence (AI) based legal research platform that simplifies finding relevant cases for attorneys. Because of Casetext's compatibility with Clio, lawyers may research an issue with a single click without ever leaving the case management software. Casetext also evaluates cases to determine their applicability.

Predictive Analytics

Law firms can improve their operations with predictive analytics in several ways. Predicting the success or failure of a case is a critical use of this technology. To do this, analysts examine past data to build predictive models. This helps lawyers decide whether a case is worth pursuing, whether to settle, and how to counsel their clients.
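As a toy illustration of building a predictive model from past data, the sketch below estimates a win probability per case type from historical outcomes. The case types and outcomes are made up, and the add-one smoothing is simply one reasonable choice; real legal analytics products use far richer features and models.

```python
from collections import Counter


def win_rates(history: list) -> dict:
    """Estimate P(win) per case type with add-one (Laplace) smoothing.

    `history` is a list of (case_type, won) pairs. Smoothing keeps a case
    type with only one or two past results from showing 0% or 100%.
    """
    wins, totals = Counter(), Counter()
    for case_type, won in history:
        totals[case_type] += 1
        wins[case_type] += int(won)
    return {t: (wins[t] + 1) / (totals[t] + 2) for t in totals}


# Invented historical outcomes for illustration only.
history = [
    ("employment", True),
    ("employment", True),
    ("employment", False),
    ("patent", False),
]
for case_type, rate in win_rates(history).items():
    print(f"{case_type}: estimated win probability {rate:.2f}")
```

With this data the employment estimate is (2 + 1) / (3 + 2) = 0.60 and the patent estimate is (0 + 1) / (1 + 2), about 0.33, rather than a misleading 0%.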

Predictive analytics may also help significantly in allocating resources. By considering factors such as case complexity, time requirements, and expected outcomes, law firms can optimize staffing, manage caseloads efficiently, and allocate resources effectively, which boosts productivity and client service.

To help businesses and law firms develop effective litigation strategies, win cases, and conclude deals, Lex Machina offers Legal Analytics.

Automated Legal Chatbots

Legal chatbots are automated computer programs that employ artificial intelligence and natural language processing to mimic human conversation and offer users legal information and advice. They are available through websites, chat applications, and online legal aid platforms.

The use of automated legal chatbots has many advantages:

- They are easily accessible at any time, day or night, without the need to schedule a meeting with a lawyer or wait for office hours.

- Unlike with human staff, you won't have to wait for a response from a chatbot. Users can obtain prompt answers to their questions and concerns about the law.

- Automated legal chatbots can answer basic legal questions and offer advice at a fraction of the price of hiring a human attorney. This lowers the barrier to entry for people and enterprises of modest means seeking legal counsel.

- Chatbots are efficient because they can process a high number of inquiries in a short amount of time. They can help many users at once and respond consistently even when they are under a heavy load.

- Research and information in the law: chatbots have access to many databases, including anything from legislation and regulations to case law and precedents. Definitions, explanations of legal ideas, and general advice on legal concerns may all be found in these resources.

Gideon is an artificial intelligence (AI) chatbot that can learn to qualify leads and respond to queries from potential clients. Gideon can often replace cumbersome paper intake forms with a straightforward conversation.

You can also build a GPT-based chatbot.
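The core of such a chatbot is small: keep the conversation history and pass it to a model on every turn. The sketch below assumes the common role/content message convention; the `IntakeChatbot` class, the system prompt, and the `chat` callable are hypothetical, and `echo_model` is a stand-in so the example runs without any real model.

```python
from typing import Callable

# Hypothetical system prompt for a law firm intake assistant.
SYSTEM_PROMPT = (
    "You are an intake assistant for a law firm. Answer general legal "
    "questions briefly and collect the details needed to qualify the enquiry."
)


class IntakeChatbot:
    """Keeps the running conversation and delegates replies to a model function."""

    def __init__(self, chat: Callable[[list], str]) -> None:
        # `chat` takes the full message history and returns the reply text.
        self.chat = chat
        self.history = [{"role": "system", "content": SYSTEM_PROMPT}]

    def ask(self, user_text: str) -> str:
        self.history.append({"role": "user", "content": user_text})
        reply = self.chat(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply


def echo_model(messages: list) -> str:
    """Stand-in for a real model call (assumption for the demo)."""
    return f"You asked: {messages[-1]['content']}"


bot = IntakeChatbot(echo_model)
print(bot.ask("Do I need a written lease?"))
```

Swapping `echo_model` for a function that calls a hosted or private model is the only change needed to make the bot real, which also keeps the choice of provider, and the confidentiality review that goes with it, in one place.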

Discovery and Due Diligence

To complete their due diligence, lawyers typically go through piles of paperwork like contracts. Legal practitioners may speed up their document review processes using AI, just as with other document-related difficulties. With AI, a due diligence solution may retrieve only the documents it needs, such as those that include a necessary clause.

AI due diligence tools can also detect document alterations or variations. Better still, AI can scan documents quickly. Lawyers can spend significantly less time reviewing documents manually, though we still advise having a person check the results.
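Two of the tasks described above, retrieving only the documents that contain a given clause and spotting alterations between versions, can be sketched with plain string matching and Python's standard `difflib`. This is a deliberately simple stand-in for what AI tools do; the file names and contract text are invented, and the helper names are hypothetical.

```python
import difflib


def documents_with_clause(documents: dict, phrase: str) -> list:
    """Return the names of documents whose text contains the phrase (case-insensitive)."""
    needle = phrase.lower()
    return sorted(name for name, text in documents.items() if needle in text.lower())


def changed_lines(original: str, revised: str) -> list:
    """Return removed ('- ') and added ('+ ') lines between two versions."""
    diff = difflib.ndiff(original.splitlines(), revised.splitlines())
    return [line for line in diff if line.startswith(("- ", "+ "))]


# Invented sample documents for illustration only.
docs = {
    "lease.txt": "The tenant shall provide indemnification for damage.",
    "nda.txt": "Each party shall keep the information confidential.",
}
print(documents_with_clause(docs, "Indemnification"))

v1 = "Payment due in 30 days.\nGoverning law: New York."
v2 = "Payment due in 60 days.\nGoverning law: New York."
for line in changed_lines(v1, v2):
    print(line)
```

Exact phrase matching misses paraphrased clauses, which is where machine learning tools add value, but a literal diff like this remains a dependable way to confirm what changed between two drafts.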

Diligen is a machine learning tool that analyzes contracts for key terms and rapidly generates a summary for attorneys to use during due diligence.

GPT can also help here.

Legal Training and Education

A law firm needs to implement a training and education system to maximize productivity, accuracy, and access to legal information. Legal research, training courses, document drafting support, and team-based education possibilities driven by AI are all available to associates. Associate professional growth, continued knowledge of relevant legal advances, and increased firm-wide efficiency are all enhanced by such a system, allowing the firm to serve its clients better.

Check our guide on e-learning for business for better understanding.

Detecting Deception

As AI continues to advance, its application in the field of law is expanding, including detecting deception. Researchers are currently focusing on developing AI systems that can identify deceitful behavior in the courtroom. In a recent trial, an AI system demonstrated an impressive 92% accuracy rate, surpassing human capabilities. Researchers have acknowledged this remarkable performance as a significant improvement.

Although the integration of AI into courtrooms is still under development, its implementation in other domains has already begun. Several jurisdictions, such as the United States, Canada, and the European Union, have initiated pilot programs that use deception-detecting kiosks to enhance border security. These initiatives highlight the potential of AI to detect deception in various contexts, signaling a promising future for its use in the legal field and beyond.

Benefits of using AI and GPT for Law

- Enhanced Productivity: By automating routine, manual tasks such as data entry, document review, or scheduling, AI can free up valuable time for staff to focus on more complex and strategic tasks.

- Improved Decision Making: AI can analyze large amounts of data quickly and accurately, providing insights and predictive analytics that can improve strategic decision-making. For instance, in a legal context, GPT could predict the likely outcomes of litigation based on historical data.

- Cost Savings: Automating routine tasks reduces the time and labor costs associated with these tasks. Moreover, AI can often perform these tasks more accurately, reducing costs associated with errors.

- Improved Customer Service: AI-powered chatbots or virtual assistants can provide 24/7 customer service, answering frequently asked questions and guiding customers through processes. This can increase customer satisfaction and loyalty.

- Personalization: AI can analyze customer data to understand their preferences and behavior, allowing firms to offer personalized services or products, which can enhance the customer experience and increase sales.

- Risk Management: AI can help firms identify and manage risks more effectively. For example, it can identify patterns of fraudulent activity, or flag compliance issues before they become serious problems.

What can Ionio help you with?

Ionio can train custom private LLMs hosted on your systems for privacy-preserving AI in legaltech, so you can automate your law firm's mundane daily tasks, from document management to analysis.

Have questions for us? Book a free consultation call to clarify your doubts.
