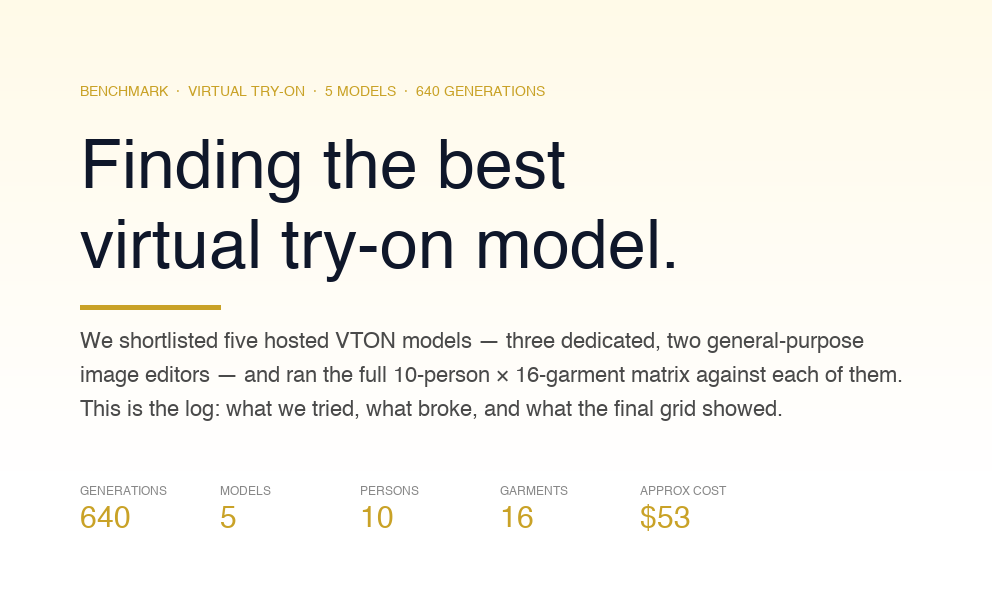

Should code be kept private or "open-source" in the race to produce AI? Meta has given researchers and academics access to its language model, LLaMA, whereas OpenAI has kept the details of projects like GPT-4 under wraps. Recently, rumors have circulated that Meta will "imminently" release a commercial version that can be tailored to individual businesses.

This year, Meta introduced LLaMA, a major step forward for open-source LLMs. The v1 models considerably sped up research into generative AI and LLMs, but they are not licensed for commercial use. Using high-quality instruction-following and chat data, Alpaca and Vicuna showed that LLaMA can be fine-tuned to behave like ChatGPT.

What Actually Is Llama 2?

Llama 2 is an open-source large language model developed by Meta. Thanks to a partnership between Meta and Microsoft, it is available on Windows and on Microsoft's cloud computing platform, Azure. The two companies made the partnership public on July 18, announcing that Llama 2 had been optimized for Windows and was available for free for both research and commercial use.

Researchers and developers can use Llama 2 to conduct studies, experiments, and projects related to natural language processing without licensing fees. Additionally, businesses and organizations can integrate Llama 2 into their products, services, and applications for various commercial purposes.

LLaMA, the name Meta used for the initial release of its model in February, is an abbreviation of Large Language Model Meta AI. The second iteration, dubbed Llama 2, drops the mixed capitalization.

The Azure AI model catalog now includes Llama 2, allowing developers to use it with their cloud-native tools for content filtering and safety features. Because it can also run locally on Windows, developers can offer generative AI experiences to clients with minimal disruption to their existing workflows, regardless of the platform they use. Llama 2 is also available through AWS, Hugging Face, and other providers.

LLaMA 1 vs Llama 2

Meta has trained and released Llama 2 in three model sizes: 7, 13, and 70 billion parameters. The underlying architecture is largely unchanged from the Llama 1 models, although 40% more data was used to pretrain the base models. Let's compare LLaMA 1 and Llama 2.

How Was Llama 2 Trained?

LLMs are first pretrained on a huge amount of data in a self-supervised way and then fine-tuned, for example with Reinforcement Learning with Human Feedback (RLHF), to align them with human preferences. Because this process needs a lot of computing power, only a few large companies can build them.

Some openly released pretrained LLMs match the performance of closed pretrained competitors, but they are not direct substitutes for closed "product" LLMs, which are heavily and expensively fine-tuned to make them usable and safe. The challenge is to balance openness against safety and ease of use. Open and reproducible methods can help AI alignment research move forward.

RLHF

Reinforcement learning from human feedback (RLHF) is a method that trains a "reward model" directly from human feedback. The reward model is then used as the reward function when optimising an agent's policy with reinforcement learning (RL), using an optimisation algorithm like Proximal Policy Optimisation. RLHF is useful when it's hard to specify a clear algorithmic objective but easy for humans to judge how good the model's output is. It has been used in different areas of natural language processing, like conversational agents, text summarisation, and natural language understanding.

Before the policy is optimised, the reward model is trained to predict whether a given output is good (high reward) or bad (low reward). RLHF can make RL agents more robust and help them explore, especially when the reward function is sparse or noisy.

The most common way to get feedback from people is to ask them to rank examples of the agent's behaviour. A rating system like Elo can then score the outputs based on these rankings. Although preference judgements are widely used, other types of human feedback, like numerical feedback, natural language feedback, and edit rate, carry more information. By reducing bias in LLMs, RLHF can help make models safer, fairer, and more inclusive, and it can also improve their overall performance.
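To make the ranking step concrete, here is a minimal sketch of how Elo ratings could be derived from pairwise judgements. The output names, the starting rating of 1000, and the K-factor of 32 are illustrative assumptions, not values taken from any particular RLHF pipeline.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(ratings: dict, winner: str, loser: str, k: float = 32.0) -> None:
    """Update ratings in place after an annotator prefers `winner` over `loser`."""
    exp_win = expected_score(ratings[winner], ratings[loser])
    delta = k * (1.0 - exp_win)  # a surprising win moves the ratings more
    ratings[winner] += delta
    ratings[loser] -= delta


# Hypothetical annotator judgements: (preferred output, rejected output).
comparisons = [("out_a", "out_b"), ("out_a", "out_c"), ("out_b", "out_c")]
ratings = {name: 1000.0 for name in ("out_a", "out_b", "out_c")}
for winner, loser in comparisons:
    update_elo(ratings, winner, loser)

# Outputs sorted from most to least preferred.
ranking = sorted(ratings, key=ratings.get, reverse=True)
```

The resulting ratings could then serve as training targets for a reward model, although many pipelines train directly on the pairwise comparisons instead.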

Pretraining

To create the new family of Llama 2 models, the researchers began with the pretraining approach described in Touvron et al. (2023), using an optimized auto-regressive transformer. However, several changes were made to improve performance significantly.

Data Cleaning and Selection

One of the crucial steps in building high-quality language models is to ensure the training data is clean and relevant. For Llama 2 models, the researchers curated a new mix of data from publicly available sources, carefully excluding any data from Meta's products or services to avoid biases or conflicts of interest.

Additionally, they made significant efforts to identify and remove data from certain websites that were known to contain a high volume of personal information about private individuals. This data cleaning process aimed to improve the overall data quality and reduce the potential for biased or sensitive information in the training corpus.

Token Count and Knowledge Enhancement

The amount of data used for pretraining affects the performance of a language model. Llama 2 was pretrained on 2 trillion tokens of data. This choice was likely a trade-off between the computational resources required for training and the performance gains from a larger training corpus.

The researchers up-sampled the most factual sources during training to enhance the model's knowledge and reduce false or inaccurate information (hallucinations). By prioritizing more reliable and fact-based data, the model is better equipped to provide accurate responses during generation.

Pretraining Data Investigations

Users and developers of a language model need to understand the strengths and weaknesses of its pretraining data. To that end, the researchers examined the pretraining data from several angles to see what it covers and what it lacks.

These investigations analysed data statistics, looked at how data was distributed across sources, and identified biases in the training corpus. The results can be found in Section 4.1 of the publication, which describes the data used to train the Llama 2 models.

Training Details

The training of Llama 2 models adopted most of the pretraining settings and model architecture from Llama 1. However, there were some notable differences to improve model performance.

What Is the Model Architecture of Llama 2?

The core architecture of the transformer model, as originally proposed by Vaswani et al. (2017), was utilized. However, to further enhance the model's capabilities, the researchers applied pre-normalization using RMSNorm (Zhang & Sennrich, 2019) and the SwiGLU activation function (Shazeer, 2020). Additionally, they incorporated rotary positional embeddings (RoPE, Su et al., 2022) to handle positional information within the model.
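The three components named above are small enough to sketch in plain Python. This is an illustrative sketch on lists of floats, not Meta's implementation: the function names, the `eps` value, the RoPE base of 10000, and the choice to rotate adjacent pairs of dimensions are assumptions (real implementations differ in how they pair dimensions and operate on batched tensors).

```python
import math


def rms_norm(x, gain, eps=1e-6):
    """RMSNorm: rescale x by its root-mean-square, then apply a learned gain."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [g * v / rms for v, g in zip(x, gain)]


def silu(v):
    """SiLU (Swish) activation: v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))


def matvec(w, x):
    """Multiply matrix w (a list of rows) by vector x."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def swiglu_ffn(x, w_gate, w_up, w_down):
    """SwiGLU feed-forward: w_down @ (silu(w_gate @ x) * (w_up @ x))."""
    gate = [silu(v) for v in matvec(w_gate, x)]
    up = matvec(w_up, x)
    return matvec(w_down, [g * u for g, u in zip(gate, up)])


def rope(x, position, base=10000.0):
    """Rotate each adjacent pair of x by an angle that grows with position."""
    out = []
    for i in range(0, len(x), 2):
        theta = position * base ** (-i / len(x))
        c, s = math.cos(theta), math.sin(theta)
        out += [x[i] * c - x[i + 1] * s, x[i] * s + x[i + 1] * c]
    return out


x = [1.0, 2.0, 3.0, 4.0]
normed = rms_norm(x, gain=[1.0] * 4)   # unit root-mean-square after scaling
rotated = rope(x, position=5)          # same length as x, position encoded
ffn_out = swiglu_ffn([0.0, 1.0], [[1, 0], [0, 1]], [[1, 0], [0, 1]], [[1, 1]])
```

Because RoPE only rotates pairs of dimensions, it changes the direction of a query or key vector but not its length, so position is encoded without distorting magnitudes.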

Architectural Enhancements

Two significant architectural enhancements were made to the Llama 2 models:

Increased Context Length

The context length, which is the number of tokens the model can take as input when making a prediction, was doubled compared to the Llama 1 models, from 2,048 to 4,096 tokens. This gives the model a broader view of the input sequence and lets it capture longer-range dependencies, leading to better contextual understanding and response generation.

Grouped-Query Attention (GQA)

The researchers adopted an attention mechanism called grouped-query attention (GQA), in which groups of query heads share a single set of key and value heads. GQA is designed to improve inference scalability, especially for the larger Llama 2 models, because fewer keys and values have to be cached and read during generation. This makes response generation faster and more memory-efficient at inference time.
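The idea behind GQA can be sketched for a single decoding step as follows. This is a simplified illustration in plain Python, assuming unbatched inputs and toy dimensions; the function name and the nested-list layout are hypothetical and chosen for readability rather than speed.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def grouped_query_attention(queries, keys, values):
    """Attention for one decoding step.

    queries: [n_q_heads][d]       one query vector per query head
    keys:    [n_kv_heads][t][d]   cached keys per key/value head
    values:  [n_kv_heads][t][d]   cached values per key/value head
    Each group of n_q_heads // n_kv_heads query heads shares one KV head.
    """
    n_q_heads, n_kv_heads = len(queries), len(keys)
    assert n_q_heads % n_kv_heads == 0
    group_size = n_q_heads // n_kv_heads
    outputs = []
    for h, q in enumerate(queries):
        kv = h // group_size  # index of the shared key/value head
        scale = 1.0 / math.sqrt(len(q))
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys[kv]]
        weights = softmax(scores)
        dim = len(values[kv][0])
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values[kv]))
            for j in range(dim)
        ])
    return outputs


# Toy example: 4 query heads share 2 key/value heads (group size 2).
queries = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
keys = [[[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]]]
values = [[[1.0, 0.0], [0.0, 1.0]], [[10.0, 0.0], [0.0, 10.0]]]
out = grouped_query_attention(queries, keys, values)
```

In this toy setup the cache holds 2 key/value heads instead of 4, which is where the inference savings come from. Setting the number of key/value heads equal to the number of query heads recovers standard multi-head attention, and using a single key/value head gives multi-query attention.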

Ablation Experiments

The researchers conducted ablation experiments to demonstrate the architectural enhancements' importance and impact. Ablation experiments involve removing or modifying specific components of the model architecture to understand their contributions to the overall performance. These experiments likely provided valuable insights into how each architectural change affected the model's capabilities and performance.

How Was Llama 2 Fine-Tuned?

Each sample in the fine-tuning process consists of a prompt and an answer. To make sure the model's sequence length is properly filled, all the prompts and answers from the training set are concatenated, with a special token separating the prompt and answer segments. Training uses an autoregressive objective in which the loss on tokens from the user prompt is zeroed out, so backpropagation is limited to answer tokens. The model is fine-tuned for 2 epochs.
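The prompt-masking step can be illustrated with a short sketch. The separator string, the helper names, and the per-token probabilities below are hypothetical; a real training loop would work on token IDs and logits from the model.

```python
import math

SEP = "<sep>"  # hypothetical separator token; the real special token may differ


def build_example(prompt_tokens, answer_tokens):
    """Concatenate prompt and answer; the mask is 1 only on answer tokens."""
    tokens = prompt_tokens + [SEP] + answer_tokens
    loss_mask = [0] * (len(prompt_tokens) + 1) + [1] * len(answer_tokens)
    return tokens, loss_mask


def masked_nll(token_probs, loss_mask):
    """Mean negative log-likelihood over the positions where the mask is 1."""
    losses = [-math.log(p) for p, m in zip(token_probs, loss_mask) if m]
    return sum(losses) / len(losses)


tokens, mask = build_example(["What", "is", "2+2", "?"], ["4", "."])
# Hypothetical probabilities the model assigned to each target token.
probs = [0.1, 0.2, 0.05, 0.3, 0.5, 0.9, 0.8]
loss = masked_nll(probs, mask)  # only the last two positions contribute
```

Because prompt positions are excluded from the average, poor predictions on the user's own words do not produce gradients, and the model is only trained to produce the answer.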

Reinforcement Learning with Human Feedback

RLHF is a training procedure applied to a fine-tuned language model to further align its behavior with human preferences and instruction following. It involves collecting data that represents empirically sampled human preferences, with human annotators selecting which of two model outputs they prefer. This feedback is then used to train a reward model, which learns the patterns in annotators' preferences and can automate preference decisions.

Human Preference Data Collection

A binary comparison approach is used to collect human preference data for reward modeling. Annotators are shown two sampled model responses and asked to choose between them according to the criteria provided. To maximize diversity, the two responses are drawn from two different model variants, each with a different temperature hyper-parameter. Annotators also indicate how strongly they prefer the chosen response over the alternative. The primary goal is to gather preference data on the "helpfulness" and "safety" of Llama 2-Chat answers.
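The effect of the temperature hyper-parameter on response diversity can be shown with a small sketch. The logits and the temperature values of 0.5 and 1.5 are made-up examples, not the settings used for Llama 2-Chat.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn logits into probabilities; lower temperature sharpens them."""
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits, temperature, rng):
    """Sample one token index from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.1]  # hypothetical next-token scores
cool = softmax_with_temperature(logits, 0.5)  # concentrates on the top token
warm = softmax_with_temperature(logits, 1.5)  # spreads probability out
token = sample_token(logits, 1.5, random.Random(0))
```

Sampling the two candidate responses at different temperatures makes it more likely that they differ meaningfully, which gives annotators a real choice to make.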

Reward Modelling

The reward model takes a model response and its corresponding prompt (including context from previous turns) as input and returns a scalar score that reflects the quality of the generation, for example its helpfulness or safety. Using these scores as rewards, Llama 2-Chat is optimized through RLHF for better alignment with human preferences, greater helpfulness, and improved safety.
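A common way to train a reward model on binary comparisons is a pairwise ranking loss that pushes the score of the chosen response above the score of the rejected one. The sketch below assumes this loss; the `margin` argument is a hypothetical way to fold in how strongly the annotator preferred the chosen response, and the scores are made-up numbers.

```python
import math


def ranking_loss(score_chosen, score_rejected, margin=0.0):
    """Pairwise loss: -log(sigmoid(score_chosen - score_rejected - margin)).

    Computed as softplus(-x) in a numerically stable form.
    """
    x = score_chosen - score_rejected - margin
    return max(-x, 0.0) + math.log1p(math.exp(-abs(x)))


# Hypothetical scalar scores produced by a reward model.
good_order = ranking_loss(2.0, 0.0)             # chosen scored higher: small loss
bad_order = ranking_loss(0.0, 2.0)              # chosen scored lower: large loss
with_margin = ranking_loss(2.0, 0.0, margin=1.0)  # strong preference demands a bigger gap
```

With a margin, the reward model is only satisfied when the chosen response beats the rejected one by a clear gap, which is one way to make use of the preference strength that annotators record.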

Data Distribution and Progressive Improvement

Human annotations were collected in weekly batches. As more preference data is collected, the reward models improve, which in turn allows progressively better versions of Llama 2-Chat to be trained. However, these improvements also shift the model's data distribution. To keep the reward model accurate, new preference data is collected with the most recent Llama 2-Chat iterations before each new tuning round. This step keeps the reward model on-distribution so that it provides an accurate reward signal for the latest version of the model.

Reward Modelling Data Statistics

The researchers report statistics for the reward modeling data collected over time. The dataset comprises over 1 million binary comparisons based on human preferences. Compared to previous open-source datasets, it has more conversation turns and longer responses on average.

Is Llama 2 Bias-Free?

The researchers acknowledge that large language models still have problems with bias, toxic comments, and hallucinations, and that LLaMA is no exception. LLaMA is a foundation model that can be applied to a wide range of use cases, but it faces the same problems as other models in this area.

The flexibility of LLaMA makes it more valuable than models that are fine-tuned for specific jobs. The researchers have made the code for LLaMA public so that people can collaborate on research into the problems mentioned above. With access to the code, other researchers can more easily try out new ways to reduce or eliminate bias, toxicity, and hallucinations in large language models.

The paper includes a set of evaluations on benchmarks that measure the model's biases and toxicity. These evaluations shed light on how well the model works and where it falls short, and they contribute to further research in this important area. This helps the research community find ways to improve and make progress on these problems.

By encouraging transparency and making collaboration easier, the researchers hope to make progress on the risks and limitations of large language models like LLaMA. The end goal is to make AI language models safer, more responsible, and ethically sound so that they can be used in a wide range of real-world situations.

Llama 2: Where Do GPT-4 and PaLM 2 Outrank It?

Meta's publication admits that Llama 2 is weaker than GPT-4, as Percy Liang, director of Stanford University's Center for Research on Foundation Models, pointed out. Llama 2 also falls short of PaLM 2.

Lower Number of 'Tokens' Used in Training

'Tokens' are the units of text used to train a generative AI model. Short words such as 'bat' and 'cool' are typical examples of tokens. When training an AI model, more tokens are generally better. As reported by TechCrunch, Llama 2 was trained on 2 trillion tokens, up from the 1.4 trillion used to train the original LLaMA. Its main competitor, Google's PaLM 2, was trained on 3.6 trillion tokens, according to a CNBC report in May.

Language Support

Google's PaLM 2 and OpenAI's GPT-4 both support 100 languages, whereas Meta's Llama 2 supports only 20. Google appears to have the upper hand here. Powered by PaLM 2, Google Bard now supports nine Indian languages (Hindi, Tamil, Telugu, Bengali, Kannada, Malayalam, Marathi, Gujarati, and Urdu). Bard currently supports more than 40 languages in total, including Bahasa Indonesia.

Falcon vs Llama 2

Two of the most discussed LLMs are Falcon and LLaMA. We'll look at how the two models stack up in terms of size, training data, capabilities, and overall performance.

Need help with AI integration?

Planning to integrate AI into your workflow? Or building your own model with Llama 2? Ionio can help you skip the guesswork. Schedule a call with our CEO, Rohan, today!