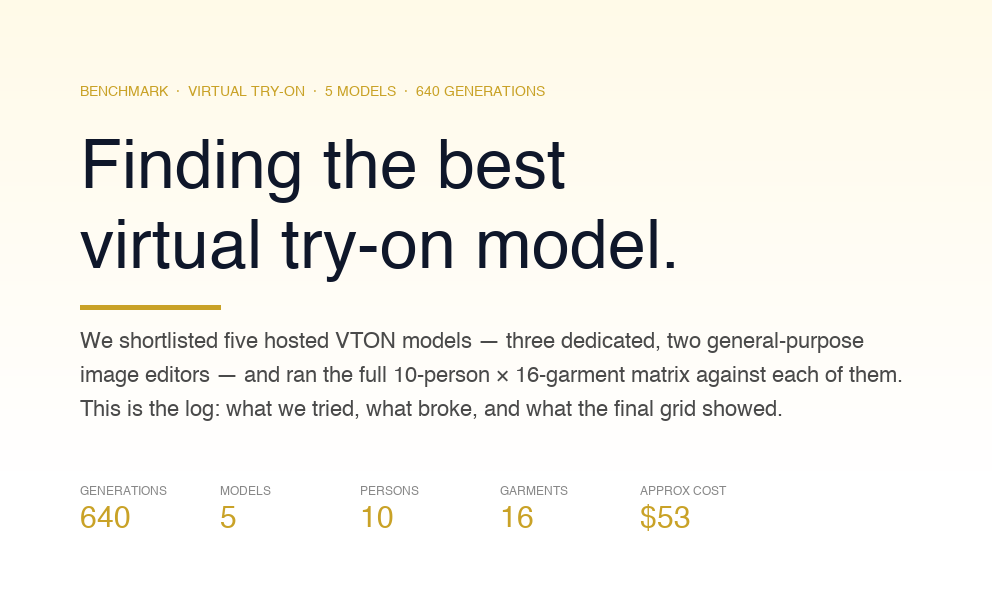

Generative AI models have revolutionized the way we approach creativity, design, and problem-solving in the digital age. Unlike discriminative models that classify input data, generative models can create new data instances. These AI systems learn to model the underlying distribution of the training data, allowing them to generate new examples that could plausibly come from the actual dataset.

Four common families of generative models are:

- Generative Adversarial Networks (GANs): GANs involve two neural networks, a generator and a discriminator, which are trained simultaneously. The generator produces samples aimed at passing for real data, while the discriminator tries to distinguish between actual and generated data.

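- Variational Autoencoders (VAEs): VAEs are based on a probabilistic framework that learns a latent space representation of the input data. An encoder maps each input to the parameters of a distribution over latent variables, and a decoder reconstructs the input from a sample of that distribution. New data is generated by decoding samples drawn from the latent prior.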

- Flow-Based Models: A flow-based generative model builds a complex data distribution from a simple one, such as a standard Gaussian. It does this by applying a series of transformations, known as normalizing flows, which are mathematically invertible. This approach allows for both direct sampling of data and exact likelihood computation, making it a powerful tool for tasks that require understanding and generating high-dimensional data.

- Diffusion Models: Diffusion models are a type of generative model that simulates the gradual process of diffusion, or the spreading of particles from regions of higher concentration to lower concentration, to generate new data samples. This process involves a forward diffusion phase that gradually adds noise to the data, transforming it into a Gaussian distribution, followed by a reverse diffusion phase that carefully removes noise to construct new data samples.

What are Diffusion Models?

Diffusion models are advanced generative artificial intelligence algorithms designed to generate structured data, such as images or text, from a state of randomness. These models operate by meticulously learning to invert a process that introduces noise into data, thereby "denoising" or reconstructing detailed and coherent outputs from an initial noise distribution. Through a controlled process of gradually reducing noise, diffusion models can synthesize realistic and complex data samples, showcasing their ability to bridge the gap between chaos and order in data generation.

In simpler terms, think of diffusion models as master restorers of art, where the canvas is your data, and the noise is years of dust and grime. They begin their task by deliberately obscuring the artwork with noise, only to demonstrate their prowess by meticulously removing every speck, revealing the masterpiece underneath. This process is not just a show of skill but the very method through which they learn. By training on noise-added images, diffusion models learn the art of reversal, applying this knowledge to transform random noise into coherent, realistic images. Imagine spraying perfume in a room; initially, its scent is concentrated, but over time it diffuses, spreading evenly throughout the space. The forward process in a diffusion model mirrors this spreading, and generation runs it in reverse: the model starts from simple, structureless noise and gradually evolves it into complex, detailed creations that mirror the intricate diversity of the world around us.

Unlike other generative models like GANs or VAEs, diffusion models excel in generating high-quality samples with intricate details. They operate through a series of learnable noise reduction steps, making them uniquely suited for tasks requiring high fidelity and coherence.

The Importance of Diffusion Models

The allure of diffusion models lies in their ability to produce remarkably realistic and diverse outputs. Their success in generating high-quality images, audio, and even text has positioned them as a significant advancement in generative AI.

One of the key advantages of diffusion models is their stability in training compared to GANs, which are known for their training difficulties. Additionally, diffusion models offer a high degree of control over the generation process, enabling the creation of conditioned samples with unprecedented detail and realism.

Basic architecture and components

Forward Diffusion Process

The forward diffusion process is the initial phase, in which the model systematically degrades the original data by adding Gaussian noise over a series of steps until the data is transformed into a state of nearly pure noise. This process is modeled as a Markov chain: each step depends only on the previous step, which keeps the process simple and tractable. Mathematically, given a data point x₀ sampled from the data distribution, the forward diffusion process at each step t can be described as

xₜ = √(1 - βₜ) xₜ₋₁ + √βₜϵ

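where ϵ is standard Gaussian noise drawn independently at each step, and βₜ is the variance of the noise added at step t. Because every step is a linear Gaussian transformation, the reparameterization trick allows us to express the noisy data xₜ directly in terms of the original data x₀ and a single noise sample. Training can therefore jump straight to any noise level without simulating the whole chain, which lets the model learn how to reverse this noising process effectively.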
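To make the forward step concrete, here is a minimal sketch in plain Python for a single scalar data point. The constant schedule `betas = [0.05] * 200`, the starting value 3.0, and the helper names `forward_step` and `forward_chain` are assumptions chosen for illustration, not values from any particular model.

```python
import math
import random


def forward_step(x_prev, beta_t, rng):
    """Apply one noising step: x_t = sqrt(1 - beta_t) * x_prev + sqrt(beta_t) * eps."""
    eps = rng.gauss(0.0, 1.0)  # fresh standard Gaussian noise for this step
    return math.sqrt(1.0 - beta_t) * x_prev + math.sqrt(beta_t) * eps


def forward_chain(x0, betas, rng):
    """Run the whole Markov chain and return [x_0, x_1, ..., x_T]."""
    xs = [x0]
    for beta_t in betas:
        xs.append(forward_step(xs[-1], beta_t, rng))
    return xs


rng = random.Random(0)
betas = [0.05] * 200  # assumed constant schedule, chosen only for illustration

# Noise the same starting value many times and look at where the chain ends up.
finals = [forward_chain(3.0, betas, rng)[-1] for _ in range(2000)]
mean = sum(finals) / len(finals)
var = sum((v - mean) ** 2 for v in finals) / len(finals)
print(f"mean of x_T: {mean:.3f}, variance of x_T: {var:.3f}")
```

Whatever the starting value, the end of the chain should look like a draw from a standard Gaussian (mean near 0, variance near 1), which is what makes pure noise a valid starting point for generation.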

Connection with Stochastic Gradient Langevin Dynamics

The methodology behind diffusion models draws inspiration from Stochastic Gradient Langevin Dynamics (SGLD), a technique from the realm of Bayesian machine learning. SGLD draws samples from a posterior distribution over model parameters by adding Gaussian noise to stochastic gradient updates, so that the iterates explore the distribution instead of collapsing to a single optimum. Diffusion models mirror this idea in data space: sampling repeatedly follows a learned estimate of the gradient of the log-density while injecting noise, highlighting a deep-rooted connection between diffusion processes and stochastic optimization methods.
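The Langevin update that this connection rests on can be sketched in a few lines. The example below is plain (unadjusted) Langevin dynamics in data space, not SGLD itself. It assumes a one-dimensional Gaussian target whose score is known in closed form; in a diffusion model that score would be estimated by a neural network. `MU`, `SIGMA`, the step size, and the function names are illustrative choices.

```python
import math
import random

MU, SIGMA = 2.0, 0.5  # assumed target distribution N(MU, SIGMA^2)


def score(x):
    """Gradient of log N(x; MU, SIGMA^2) with respect to x."""
    return -(x - MU) / SIGMA ** 2


def langevin_sample(rng, steps=500, step_size=0.01):
    """Follow the score uphill while injecting Gaussian noise at every step."""
    x = rng.gauss(0.0, 1.0)  # start from noise unrelated to the target
    for _ in range(steps):
        z = rng.gauss(0.0, 1.0)
        x = x + 0.5 * step_size * score(x) + math.sqrt(step_size) * z
    return x


rng = random.Random(0)
samples = [langevin_sample(rng) for _ in range(500)]
mean = sum(samples) / len(samples)
std = math.sqrt(sum((s - mean) ** 2 for s in samples) / len(samples))
print(f"sample mean: {mean:.3f}, sample std: {std:.3f}")
```

Without the noise term the update would be plain gradient ascent and every chain would end at the mode; the injected noise is what turns optimization into sampling.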

Reverse Diffusion Process

Following the forward diffusion, the reverse diffusion process works to recover the original data from its noise-embedded version. This phase involves a series of denoising steps, each designed to incrementally reduce the noise level and restore the data's original structure. The reverse process is guided by a learned denoising function which, given the noisy input and the current step, predicts the noise to remove (or, equivalently, a slightly cleaner version of the data). This denoising function is at the core of the model's generative capabilities, enabling the synthesis of new, realistic samples from random noise.

Simplifications and Mathematical Foundations

Ultimately, the architecture of diffusion models, with its reliance on reversing the noise addition using learned denoising steps, enables them to generate high-quality data samples from complex distributions. To illustrate this with a mathematical example, let's consider a simple data distribution represented by a Gaussian distribution with mean μ and variance σ².

In the forward process, Gaussian noise is added to the data point x₀ to create a sequence of increasingly noisy data points x₁, x₂, ..., xₜ, where each xᵢ represents the data point after i steps of noise addition. The variance of the noise added at each step is determined by a variance schedule β₁, β₂, ..., βₜ, which can be linear, quadratic, or cosine, for instance.
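As a sketch of what such schedules look like in code, the functions below build a linear schedule and a cosine schedule and then track the cumulative product of (1 - β), which measures how much of the original signal variance survives after each step. The default endpoints (1e-4 to 0.02), the offset `s=0.008`, and the clipping value 0.999 follow commonly published settings but are assumptions here, as are the function names.

```python
import math


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variance grows by the same amount at every step."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cosine_beta_schedule(num_steps, s=0.008, max_beta=0.999):
    """Betas derived from a cosine-shaped curve for the surviving signal."""
    def f(t):
        return math.cos((t / num_steps + s) / (1 + s) * math.pi / 2) ** 2

    # beta_t = 1 - f(t) / f(t - 1), clipped so it never reaches 1
    return [min(1.0 - f(t) / f(t - 1), max_beta) for t in range(1, num_steps + 1)]


def cumulative_alpha_bar(betas):
    """Running product of (1 - beta): fraction of signal variance left after each step."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


linear = linear_beta_schedule(1000)
cosine = cosine_beta_schedule(1000)
abar_linear = cumulative_alpha_bar(linear)
abar_cosine = cumulative_alpha_bar(cosine)
print(f"signal left halfway: linear={abar_linear[499]:.3f}, cosine={abar_cosine[499]:.3f}")
```

Comparing the two curves at the halfway point shows the practical difference between schedules: they end in nearly pure noise either way, but they destroy information at different rates along the way.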

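Using the reparameterization trick, we can express xᵢ directly in terms of x₀ and Gaussian noise ϵ, which simplifies sampling and calculations. Writing ᾱᵢ = (1 - β₁)(1 - β₂)⋯(1 - βᵢ) for the fraction of the original signal variance that survives after i steps:

xᵢ = √ᾱᵢ x₀ + √(1 - ᾱᵢ) ϵ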

In the reverse process, the model learns to estimate the noise ϵ that was added at each step and remove it to recover the original data point from the noisy data point. The model does this by predicting the parameters of the Gaussian distribution at each reverse step, starting with xₜ (which is almost pure noise) and progressing backward to x₀.

For example, if our original data point x₀ is sampled from a Gaussian distribution with mean 0 and variance 1, and we use a variance schedule where β₁ is very small (e.g., 0.01), the first noisy data point x₁ would be very close to x₀ but with a little noise added. If the model learns the reverse diffusion process well, it can start with x₁ and accurately predict the noise that was added to return to the original data point x₀.
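The single-step example above can be worked through numerically. The specific values of `x0` and `eps` below are made up for illustration; in practice ϵ is not known at generation time and has to be predicted by the trained network.

```python
import math


def noise_one_step(x0, eps, beta1):
    """First forward step: x_1 = sqrt(1 - beta_1) * x_0 + sqrt(beta_1) * eps."""
    return math.sqrt(1.0 - beta1) * x0 + math.sqrt(beta1) * eps


def denoise_one_step(x1, eps_pred, beta1):
    """Invert the step above, given a prediction of the noise that was added."""
    return (x1 - math.sqrt(beta1) * eps_pred) / math.sqrt(1.0 - beta1)


beta1 = 0.01          # small first-step variance, as in the text
x0, eps = 0.8, -1.2   # illustrative data point and noise draw

x1 = noise_one_step(x0, eps, beta1)
x0_perfect = denoise_one_step(x1, eps, beta1)        # exact noise prediction
x0_imperfect = denoise_one_step(x1, eps + 0.5, beta1)  # prediction off by 0.5

print(f"x1 = {x1:.4f}")
print(f"recovered with exact noise:   {x0_perfect:.4f}")
print(f"recovered with a 0.5 error:   {x0_imperfect:.4f}")
```

With β₁ = 0.01 the data is scaled by about 0.995 and the noise by 0.1, so even a sizeable error in the predicted noise shifts the recovered point only slightly. At later steps, where more noise has accumulated, the same prediction error would cost much more.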

Training of Diffusion Models

Training diffusion models involves teaching a neural network to reverse a diffusion process, which is essentially a process of adding noise to data. The goal is to start with a complex data distribution and learn to generate samples from this distribution by reversing the noising process.

Parameterization of the Diffusion Process

The diffusion process is parameterized by defining how noise is added at each step. Typically, Gaussian noise is added incrementally in a way that can be precisely controlled and reversed. The parameters of this noise addition, such as the variance schedule of the noise, are crucial as they define the trajectory of the forward diffusion process.

Training Loss and Optimization

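The training loss for diffusion models is usually a mean squared error. In the most common formulation, a training example is noised to a randomly chosen step, the network predicts the noise that was added, and the loss is the squared difference between the predicted and the true noise. This is equivalent, up to a per-step weighting, to comparing the denoised data with the original data. The model minimizes this loss through backpropagation with optimizers such as stochastic gradient descent or Adam. Training covers the full range of noise levels so that the model learns to denoise both lightly and heavily corrupted inputs, which is needed to create high-fidelity samples.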
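A toy version of this optimization can be written without any deep learning library. The sketch below assumes the widely used noise-prediction form of the loss, one-dimensional data drawn from N(0, 1), a single fixed noise level, and a "network" that is just one weight `w` with prediction `w * x_t`. All of these are simplifications chosen so the result can be checked by hand: for this setup the best possible weight is √(1 - ᾱ).

```python
import math
import random


def train_noise_predictor(alpha_bar, steps=3000, batch=16, lr=0.01, seed=0):
    """Fit eps_hat = w * x_t by minibatch SGD on the squared noise-prediction error."""
    rng = random.Random(seed)
    signal, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    w = 0.0
    for _ in range(steps):
        grad = 0.0
        for _ in range(batch):
            x0 = rng.gauss(0.0, 1.0)    # toy data point
            eps = rng.gauss(0.0, 1.0)   # noise the model must recover
            x_t = signal * x0 + noise * eps
            # derivative of (w * x_t - eps)^2 with respect to w
            grad += 2.0 * (w * x_t - eps) * x_t
        w -= lr * grad / batch
    return w


alpha_bar = 0.5
w = train_noise_predictor(alpha_bar)
print(f"learned weight: {w:.3f}, theoretical optimum: {math.sqrt(1 - alpha_bar):.3f}")
```

A real model replaces the single weight with a neural network that also receives the step index, and samples a different noise level for every training example.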

Connection with Noise-Conditioned Score Networks (NCSN)

Noise-Conditioned Score Networks are a class of neural networks used within the diffusion model framework. They are trained to estimate the gradient of the log probability density (the score) of the data given different noise levels. Essentially, they learn to predict the direction in which to update the data to make it more likely under the model's current understanding of the data distribution. The connection with diffusion models comes from the fact that NCSN can be used to guide the denoising process, conditioning the reverse diffusion on the noise level at each step.
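The link between score estimation and noise prediction can be checked numerically. The sketch below assumes the standard Gaussian forward kernel, in which a noised point has mean √ᾱ·x₀ and variance 1 - ᾱ, and compares the analytic score of that kernel with a finite-difference derivative of its log-density. The numbers for `x0`, `eps`, and `alpha_bar` are arbitrary.

```python
import math


def log_density(x_t, x0, alpha_bar):
    """Log of the Gaussian density N(x_t; sqrt(alpha_bar) * x0, 1 - alpha_bar)."""
    mean, var = math.sqrt(alpha_bar) * x0, 1.0 - alpha_bar
    return -0.5 * math.log(2.0 * math.pi * var) - (x_t - mean) ** 2 / (2.0 * var)


def score(x_t, x0, alpha_bar):
    """Analytic gradient of log_density with respect to x_t."""
    return -(x_t - math.sqrt(alpha_bar) * x0) / (1.0 - alpha_bar)


x0, eps, alpha_bar = 1.5, 0.7, 0.6
x_t = math.sqrt(alpha_bar) * x0 + math.sqrt(1.0 - alpha_bar) * eps

h = 1e-5  # finite-difference step
numeric = (log_density(x_t + h, x0, alpha_bar) - log_density(x_t - h, x0, alpha_bar)) / (2 * h)
analytic = score(x_t, x0, alpha_bar)
from_noise = -eps / math.sqrt(1.0 - alpha_bar)

print(f"numeric={numeric:.6f} analytic={analytic:.6f} from_noise={from_noise:.6f}")
```

All three values agree, which is why a network trained to predict the added noise is, up to a known scale factor, also an estimator of the score at that noise level.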

These components work together to enable diffusion models to generate new data that is similar to the training data. The process involves a complex interaction between the forward diffusion process, the architecture of the neural network, and the optimization process.

![image](https://yang-song.net/assets/img/score/cifar10_large.gif)

Speeding up Diffusion Model Sampling

Speeding up diffusion model sampling involves several strategies to enhance the efficiency of generating new samples. One approach is to reduce the number of steps in the reverse diffusion process without compromising the quality of generated samples. Techniques like learning-based step-size scheduling, where the model dynamically adjusts the step sizes during sampling, can significantly reduce computation time. Another method involves using more powerful neural network architectures that can more effectively learn the distribution of data, allowing for faster convergence. Additionally, parallel computing and optimization of algorithmic implementations can also contribute to speed enhancements. These advancements aim to make diffusion models more practical for real-world applications by decreasing the time required to generate new, high-quality samples.

Classifier Guided Diffusion (CGD)

Classifier Guided Diffusion (CGD) involves enhancing the generation process of diffusion models with guidance from a classifier. In this setup, a classifier trained on noisy inputs is used to steer the generation towards a chosen class: at each denoising step, the gradient of the classifier's log-probability for that class with respect to the noisy sample is added to the model's update. The guidance scale multiplies this gradient and so controls the influence of the classifier on the generation process. The higher the guidance scale, the more strongly samples match the target class, often leading to higher quality and more precise outputs at the cost of reduced diversity.
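One common way to apply the classifier gradient is to shift the predicted noise before the usual denoising update. The sketch below illustrates that form on a scalar. The "classifier" is a toy stand-in, p(y = 1 | x) = sigmoid(x), chosen because its gradient is easy to write down; a real setup would use a trained network and automatic differentiation. The function names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def classifier_log_prob_grad(x_t):
    """d/dx log sigmoid(x) for the toy classifier p(y=1 | x) = sigmoid(x)."""
    return 1.0 - sigmoid(x_t)


def guided_eps(eps_pred, x_t, alpha_bar_t, scale):
    """Shift the noise prediction against the classifier gradient.

    eps_guided = eps_pred - scale * sqrt(1 - alpha_bar_t) * grad log p(y | x_t)
    """
    grad = classifier_log_prob_grad(x_t)
    return eps_pred - scale * math.sqrt(1.0 - alpha_bar_t) * grad


eps_pred, x_t, alpha_bar_t = 0.3, 0.0, 0.5
for scale in (0.0, 1.0, 2.0):
    print(scale, round(guided_eps(eps_pred, x_t, alpha_bar_t, scale), 4))
```

Lowering the predicted noise in the direction of the gradient raises the implied clean sample, which for this toy classifier means moving towards larger x, where class 1 is more probable. A scale of 0 recovers the unguided prediction.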

Classifier-Free Guidance

Classifier-Free Guidance (CFG) is a technique that enables conditioning a diffusion model without a separate classifier. It involves training a single model that can operate both conditionally and unconditionally. During training, the conditioning information is randomly dropped for a fraction of the examples, which allows the same model to learn the unconditional distribution as well. During sampling, the model is evaluated both with and without the condition, and the two noise predictions are combined: moving further from the unconditional prediction towards the conditional one follows the condition more closely, while staying nearer to it gives more diverse outputs. This modulation is controlled by a guidance scale similar to CGD but doesn't require an external classifier.
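Both halves of the technique fit in a short sketch. It assumes the convention in which a scale of 1 returns the conditional prediction unchanged and larger values extrapolate past it; other write-ups parameterize the scale differently. The function names, the drop probability, and the use of `None` to mean "no condition" are illustrative assumptions.

```python
import random


def maybe_drop_condition(cond, p_uncond, rng):
    """Training side: replace the condition with None with probability p_uncond."""
    return None if rng.random() < p_uncond else cond


def cfg_combine(eps_uncond, eps_cond, scale):
    """Sampling side: eps = eps_uncond + scale * (eps_cond - eps_uncond), elementwise."""
    return [u + scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Two noise predictions for the same noisy input, with and without the prompt.
eps_uncond = [0.0, 1.0]
eps_cond = [1.0, 3.0]

print(cfg_combine(eps_uncond, eps_cond, 0.0))  # ignores the condition
print(cfg_combine(eps_uncond, eps_cond, 1.0))  # plain conditional prediction
print(cfg_combine(eps_uncond, eps_cond, 2.0))  # pushes past the conditional prediction

rng = random.Random(0)
kept = sum(maybe_drop_condition("a butterfly", 0.1, rng) is not None for _ in range(1000))
print(f"condition kept in {kept} of 1000 training examples")
```

The cost of this approach is two forward passes per sampling step, one with the condition and one without, in exchange for not having to train or differentiate through a separate classifier.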

Denoising Diffusion Probabilistic Models (DDPMs)

DDPMs are a class of generative models that simulate the process of gradually adding noise to data until it becomes pure noise, and then learning to reverse this process to generate data from noise. Technically, DDPMs operate in two phases: the forward (noising) phase and the backward (denoising) phase.

- Forward Phase: This involves gradually adding Gaussian noise to the data through a predefined number of steps, transforming the data into a distribution that is close to Gaussian noise. This process is described by a Markov chain where the transition probabilities at each step are known and involve adding a small amount of noise at each step.

- Backward Phase: The backward phase is where the model learns to reverse the noising process. This involves training a neural network to predict the noise that was added at each step of the forward process, essentially learning to denoise the data. The model uses this learned denoising process to generate data starting from pure noise, iteratively refining the data through the reverse of the forward Markov chain.

The key to DDPMs is the iterative refinement process during generation. The predicted noise is, up to a scale factor, an estimate of the gradient of the log-density of the noisy data with respect to the data itself, so each step nudges the sample towards more probable regions. The form of each reverse step follows from Bayes' rule, which uses the forward process's known transition probabilities to derive the reverse transitions.
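The backward phase can be sketched end to end on a toy problem. The example below assumes one-dimensional data drawn from N(2, 0.5²), a linear schedule with 200 steps, and a reverse-step variance equal to βₜ. Instead of a trained network it uses the noise predictor that is known in closed form for Gaussian data (`optimal_eps`), so the only thing being demonstrated is the sampling loop itself. All names and constants are illustrative.

```python
import math
import random

T = 200
betas = [1e-4 + (0.05 - 1e-4) * i / (T - 1) for i in range(T)]  # assumed linear schedule
alphas = [1.0 - b for b in betas]
alpha_bars, prod = [], 1.0
for a in alphas:
    prod *= a
    alpha_bars.append(prod)

MU, S = 2.0, 0.5  # toy data distribution N(MU, S^2)


def optimal_eps(x_t, t):
    """Closed-form E[eps | x_t] for Gaussian data; stands in for a trained network."""
    ab = alpha_bars[t]
    var_t = ab * S * S + (1.0 - ab)
    return math.sqrt(1.0 - ab) * (x_t - math.sqrt(ab) * MU) / var_t


def ddpm_sample(rng):
    """Ancestral sampling: start from noise and apply T stochastic denoising steps."""
    x = rng.gauss(0.0, 1.0)
    for t in reversed(range(T)):
        eps_hat = optimal_eps(x, t)
        mean = (x - betas[t] / math.sqrt(1.0 - alpha_bars[t]) * eps_hat) / math.sqrt(alphas[t])
        z = rng.gauss(0.0, 1.0) if t > 0 else 0.0  # no noise on the final step
        x = mean + math.sqrt(betas[t]) * z
    return x


rng = random.Random(0)
samples = [ddpm_sample(rng) for _ in range(1000)]
mean = sum(samples) / len(samples)
std = math.sqrt(sum((s - mean) ** 2 for s in samples) / len(samples))
print(f"generated mean: {mean:.3f} (target {MU}), std: {std:.3f} (target {S})")
```

Starting from standard Gaussian noise, the loop should produce samples whose mean and spread are close to those of the toy data distribution. Swapping `optimal_eps` for a trained network is the only change needed for real data, where no closed form exists.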

Denoising Diffusion Implicit Models (DDIMs)

DDIMs are a variant of DDPMs that replace the Markovian forward process with a non-Markovian one that has the same marginal noise distributions. Because the training objective is unchanged, a network trained as a DDPM can be sampled with the DDIM procedure without retraining. DDIMs also expose the amount of randomness in each reverse step as a free parameter, enabling more direct control over the trajectory of the denoising path. This results in several practical benefits:

- Faster Sampling: DDIMs can generate samples in fewer steps than traditional DDPMs by taking larger, more informed steps in the denoising process. This is achieved by running the reverse process over a shorter subsequence of the original timesteps, for example 50 steps instead of 1,000, typically with only a modest loss in quality.

- Deterministic Sampling: Unlike DDPMs, whose reverse process injects fresh random noise at every step, DDIMs can set that per-step noise to zero. Sampling then becomes deterministic: each initial noise sample maps to exactly one output.

- Controlled Generation: The implicit nature of the denoising steps in DDIMs allows for more nuanced control over the generation process. This can be leveraged for tasks such as controlled image generation, interpolation between samples, and even conditional generation with fewer artifacts.
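The deterministic, strided update behind these benefits can be sketched on a toy problem. The example assumes one-dimensional data from N(2, 0.5²), a 200-step linear schedule, the zero-noise variant of the DDIM update, and 20 sampling steps. As a stand-in for a trained network it uses the noise predictor that is known in closed form for Gaussian data. Names and constants are illustrative.

```python
import math
import random

T = 200
betas = [1e-4 + (0.05 - 1e-4) * i / (T - 1) for i in range(T)]  # assumed linear schedule
alpha_bars = [1.0]  # alpha_bars[0] = 1 means "no noise"; alpha_bars[t] is for step t
for b in betas:
    alpha_bars.append(alpha_bars[-1] * (1.0 - b))

MU, S = 2.0, 0.5  # toy data distribution N(MU, S^2)


def optimal_eps(x_t, t):
    """Closed-form E[eps | x_t] for Gaussian data; stands in for a trained network."""
    ab = alpha_bars[t]
    var_t = ab * S * S + (1.0 - ab)
    return math.sqrt(1.0 - ab) * (x_t - math.sqrt(ab) * MU) / var_t


def ddim_step(x_t, t, t_prev):
    """Deterministic jump from step t to an earlier step t_prev (no noise injected)."""
    eps_hat = optimal_eps(x_t, t)
    x0_hat = (x_t - math.sqrt(1.0 - alpha_bars[t]) * eps_hat) / math.sqrt(alpha_bars[t])
    return math.sqrt(alpha_bars[t_prev]) * x0_hat + math.sqrt(1.0 - alpha_bars[t_prev]) * eps_hat


def ddim_sample(x_T, num_steps=20):
    """Visit only every (T // num_steps)-th timestep on the way from T down to 0."""
    stride = T // num_steps
    x = x_T
    for t in range(T, 0, -stride):
        x = ddim_step(x, t, max(t - stride, 0))
    return x


rng = random.Random(0)
samples = [ddim_sample(rng.gauss(0.0, 1.0)) for _ in range(1000)]
mean = sum(samples) / len(samples)
std = math.sqrt(sum((s - mean) ** 2 for s in samples) / len(samples))
print(f"20-step DDIM mean: {mean:.3f}, std: {std:.3f}")
print("same noise, same output:", ddim_sample(0.3) == ddim_sample(0.3))
```

Because no randomness enters after the initial noise is drawn, the starting noise acts as a latent code for the output. That property is what makes interpolation between samples meaningful. With so few steps the spread of the outputs comes out somewhat narrower than the target, which illustrates the quality trade-off of aggressive step skipping.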

Getting Hands-on with Diffusion Models

Environment Setup

First, ensure the necessary libraries are installed. This includes diffusers[training] for training diffusion models, huggingface_hub for sharing models, and datasets for data handling. Additionally, install torchvision for image transformations and torch for model training and operations.

Data Preparation

Load the Smithsonian Butterflies dataset using the datasets library. Preprocess images to a uniform size and apply augmentations like random horizontal flips and normalization.

Model and Scheduler Setup

Initialize a UNet2DModel with specified architecture parameters. Use a diffusion scheduler, such as DDPMScheduler, to manage the noise levels during training.

Training Loop

Define the training loop, including noise addition, model optimization, and evaluation. Utilize the Accelerate library for efficient multi-GPU training.

Model Evaluation and Saving

After training, generate and save images to evaluate the model's performance visually. Optionally, push the trained model to Hugging Face Hub for sharing.

This walkthrough provides a foundational understanding of training diffusion models on custom datasets. For more detailed information and advanced techniques, refer to the Hugging Face Diffusers documentation and tutorials.

Some popular diffusion models

Stable Diffusion

Stable Diffusion, a cutting-edge text-to-image model, excels at generating highly detailed and diverse images based on textual descriptions. Its capability spans a broad spectrum of styles, from photorealistic to artistic renditions, enabling users to create images that closely align with their envisioned descriptions. This technology has opened new avenues for creativity and design, offering unparalleled flexibility in image generation.

The core principle behind Stable Diffusion, and latent diffusion models in general, involves a two-step process: encoding and diffusion. During training, an input image is first encoded into a lower-dimensional latent space using an encoder network. This approach significantly reduces the computational complexity compared to direct high-dimensional image processing. The forward diffusion process then gradually adds noise to this latent representation over a series of steps, effectively 'diffusing' the original information, and a denoising network, typically a U-Net architecture, is trained to predict that noise.

During the reverse process, the model generates new data by reversing the noise addition, gradually 'denoising' random latent noise into a coherent latent representation. This is where the textual description plays a crucial role, guiding the denoising process to form images that match the given text. The final step involves a decoder network, which maps the generated latent representation back into pixel space as a full-resolution image.

The efficiency of latent diffusion models like Stable Diffusion lies in their ability to operate in this compressed latent space, making the training and generation processes more computationally feasible. Moreover, the flexibility of the model is enhanced by its ability to be conditioned on textual descriptions, enabling the generation of images that are closely aligned with the user's intent.

- Performance: Designed for high performance, it can generate high-quality images quickly, even on consumer-grade hardware.

- Customizability: The open-source model encourages experimentation and fine-tuning, making it a favorite among researchers and hobbyists for creating custom applications.

DALL-E 2

DALL-E 2 is a model developed by OpenAI for generating images from textual descriptions. It significantly improves upon the original DALL-E in terms of image quality, resolution, and the model's ability to capture and render nuanced details from textual prompts. The improvements in DALL-E 2 can be attributed to a few key advancements:

- CLIP-guided Processes: DALL-E 2 integrates insights from CLIP (Contrastive Language–Image Pre-training), another OpenAI model designed to understand images in the context of natural language. This integration allows DALL-E 2 to generate images that are more closely aligned with the textual descriptions, capturing subtleties and nuances that were previously challenging.

- Hierarchical Representation: DALL-E 2 generates images in stages. A prior model first maps the text caption to a CLIP image embedding, a diffusion decoder then generates a low-resolution image conditioned on that embedding, and diffusion upsamplers progressively increase the resolution. This allows the model to maintain coherence while producing high-resolution outputs.

- Diffusion Techniques: Leveraging improved diffusion techniques, DALL-E 2 can generate images with finer details and textures. These techniques also help in reducing artifacts and improving the realism of generated images.

GLIDE

GLIDE (Guided Language to Image Diffusion for Generation and Editing) is another model that generates images from textual descriptions. Developed by OpenAI, it is a text-conditional diffusion model whose authors compared two guidance strategies, CLIP guidance and classifier-free guidance, and found that classifier-free guidance produced the samples preferred by human evaluators. Features include:

- Text-conditional Image Generation: Generates images that are closely aligned with complex textual descriptions, capturing both the overall theme and specific details mentioned in the text.

- Image Editing: Can perform targeted edits on existing images based on textual prompts, enabling users to modify or add elements to an image in a contextually coherent manner.

Imagic

Imagic, developed by researchers at Google, is designed to edit images in a semantically meaningful way based on textual prompts. It uses a diffusion model trained to understand both the content of the images and the intent behind the textual prompts, enabling precise and context-aware image manipulations. Key features include:

- Text-driven Image Manipulation: Allows users to specify edits in natural language, which the model interprets to apply changes directly to the image, preserving the original style and context.

- Fine-grained Control: Provides detailed control over the editing process, enabling complex changes that reflect the subtleties of the input text.
